Redesigning Mailchimp’s A/B Testing Platform

Led the redesign of Mailchimp’s experimentation platform to help marketers run effective A/B tests.

The new experience increased experimentation adoption by about 140% and repeat usage 22x (1% → 23%).

Role

Senior product designer

Team

PM, Engineers, Data Science

Timeline

8 months

Key Contributions

End-to-End Product Ownership: As the sole designer, led the design strategy and execution for Mailchimp’s experimentation platform, turning a complex technical capability into a user-centric growth engine.

Strategic Alignment & Buy-in: Partnered with PM, Engineering, and Data Science leadership to diagnose systemic adoption barriers (0.34% campaign usage) and secured executive approval for a multi-year redesign roadmap.

Scalable Design Systems: Developed and integrated a cross-channel experimentation framework into the core design system, enabling consistent A/B testing patterns across multiple platform area teams.

AI Choice Architecture: Defined the interaction principles for generative AI within the workflow, balancing user autonomy with curated options to mitigate choice paralysis and cognitive load.

Data-Informed Direction: Utilized in-product A/B testing and quick usability testing to drive product decision making and inform design direction.

Problem

Through data analysis in Looker, I found that although experimentation is critical to marketing performance, only 0.34% of Mailchimp campaigns used A/B testing.

95% of users had not used A/B testing in Mailchimp

Interviews revealed that marketers avoided the feature because the setup process was misaligned with how they build campaigns and limited what they could test.

This created a competitive risk as users churned to platforms with easier experimentation workflows.

“The biggest difference between Mailchimp and (competitor), and one of the main reasons we switched, was the ability to A/B test automations.”

Goal

Improve experimentation adoption through an increase in exploration, usage, and retention

Increase monthly usage in line with the business's churn-risk reduction metrics

Old Experience

Below are key screens in the old workflow

Old workflow had low discoverability & usage rates

Research & Key Insights

Methods

Each stage of the process called for a different method, chosen by weighing research goals, timelines, and effectiveness.

20 user interviews

Analytics review

Competitive research

Concept testing

Usability testing

Insight #1

Marketers' A/B testing needs evolve with their marketing capabilities

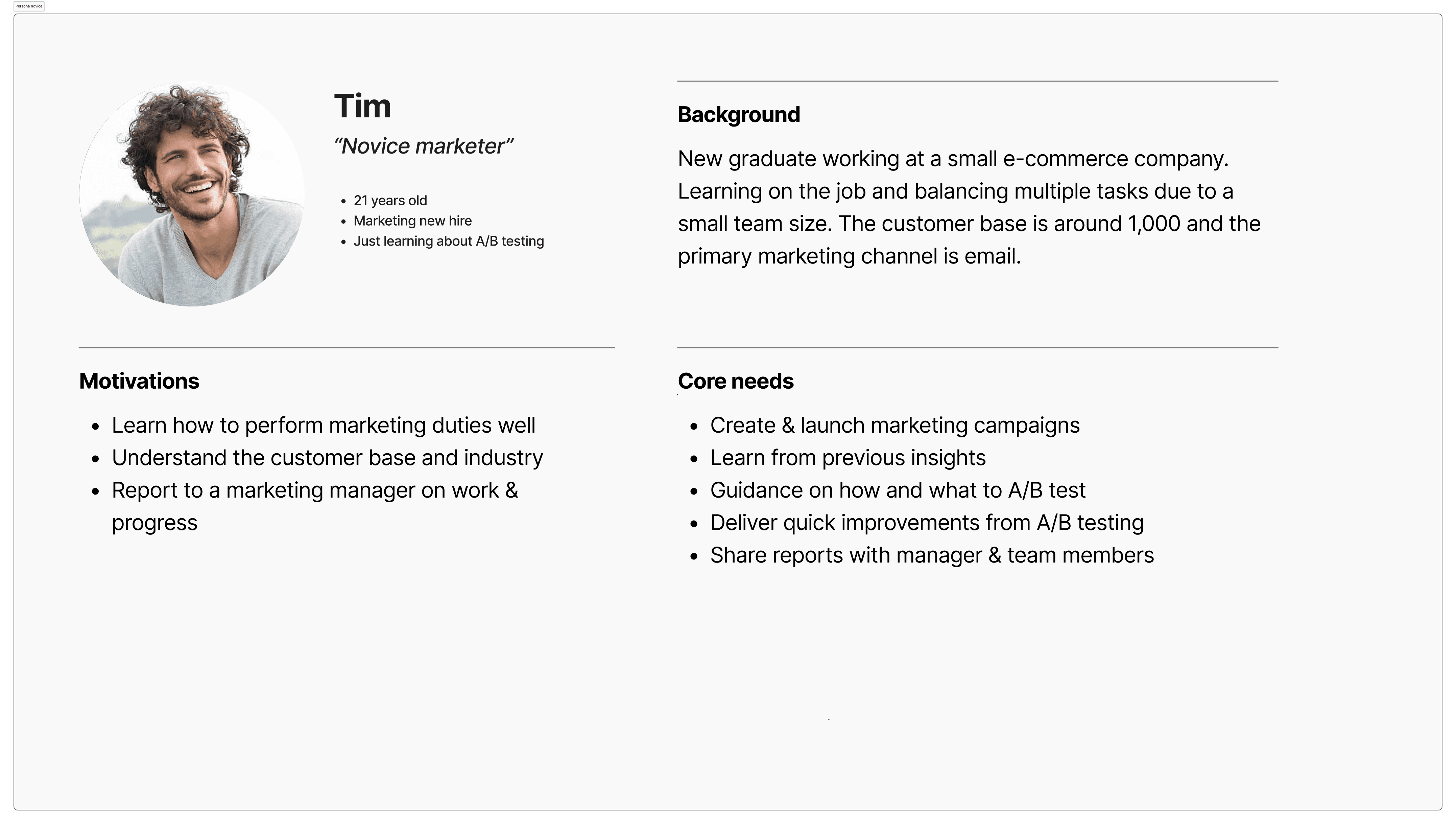

Early-career marketers need more guidance and quick, simple tests to learn about their general audience, while advanced marketers need the ability to test more specific hypotheses.

Process

User interviews were conducted with marketers of varying experience & company sizes

Personas were created to guide future designs

Design implications

Balance a feature set & layout that allows for simple & advanced tests

Personas of novice & advanced marketers

Insight #2

Marketers create their base campaign before creating test variations

Users were observed to create base campaigns first to meet their core campaign needs, before adding test variations to test hypotheses.

Process

Contextual inquiry showed users completing their campaigns first, and then adding variants

In-product A/B testing revealed that the test creation entry point needed to be placed after a user creates a campaign

A journey map was created to outline the user's process

Design implications

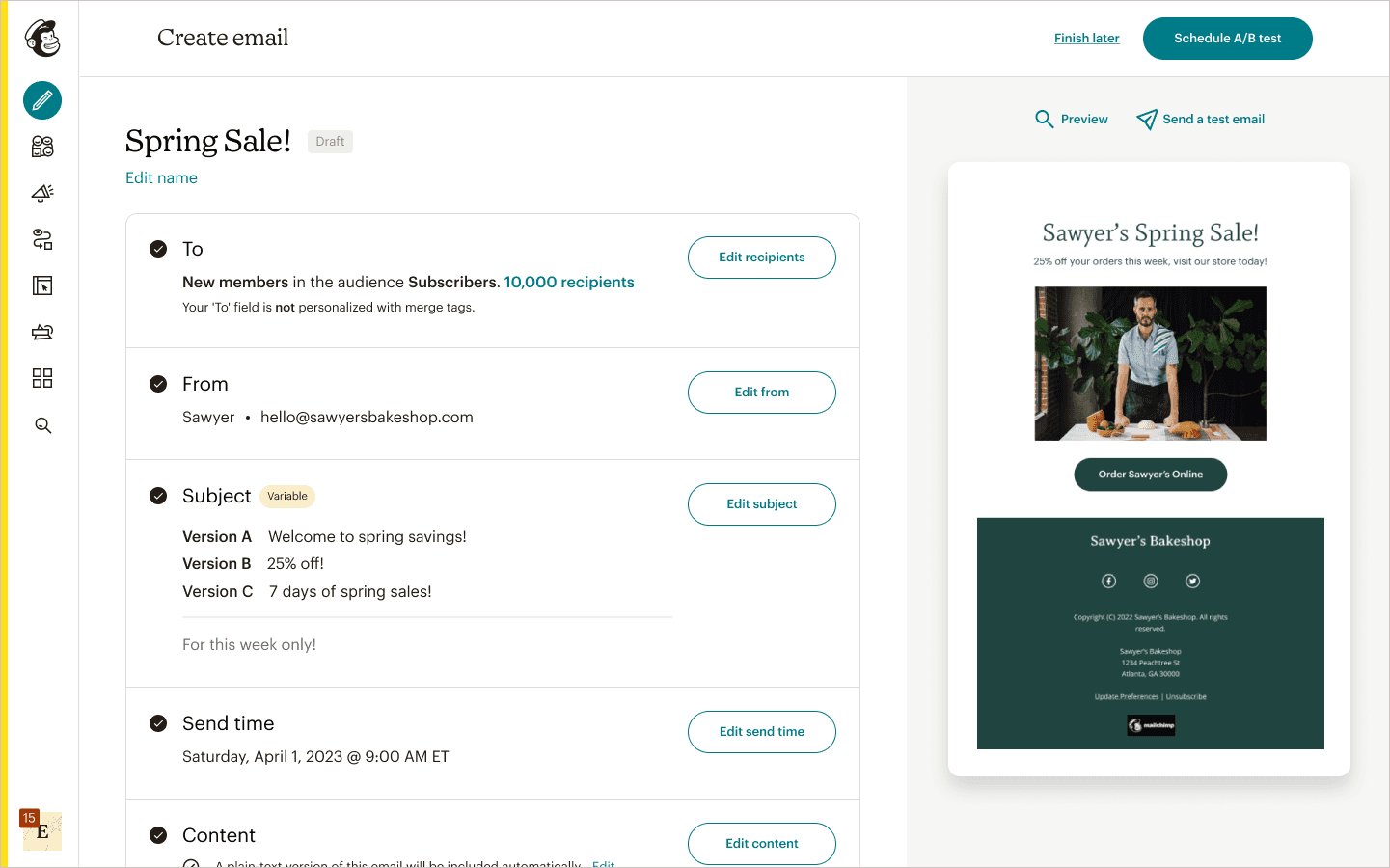

The test creation entry point was placed in context, with test details set after the base campaign is created

Journey map of the process

Insight #3

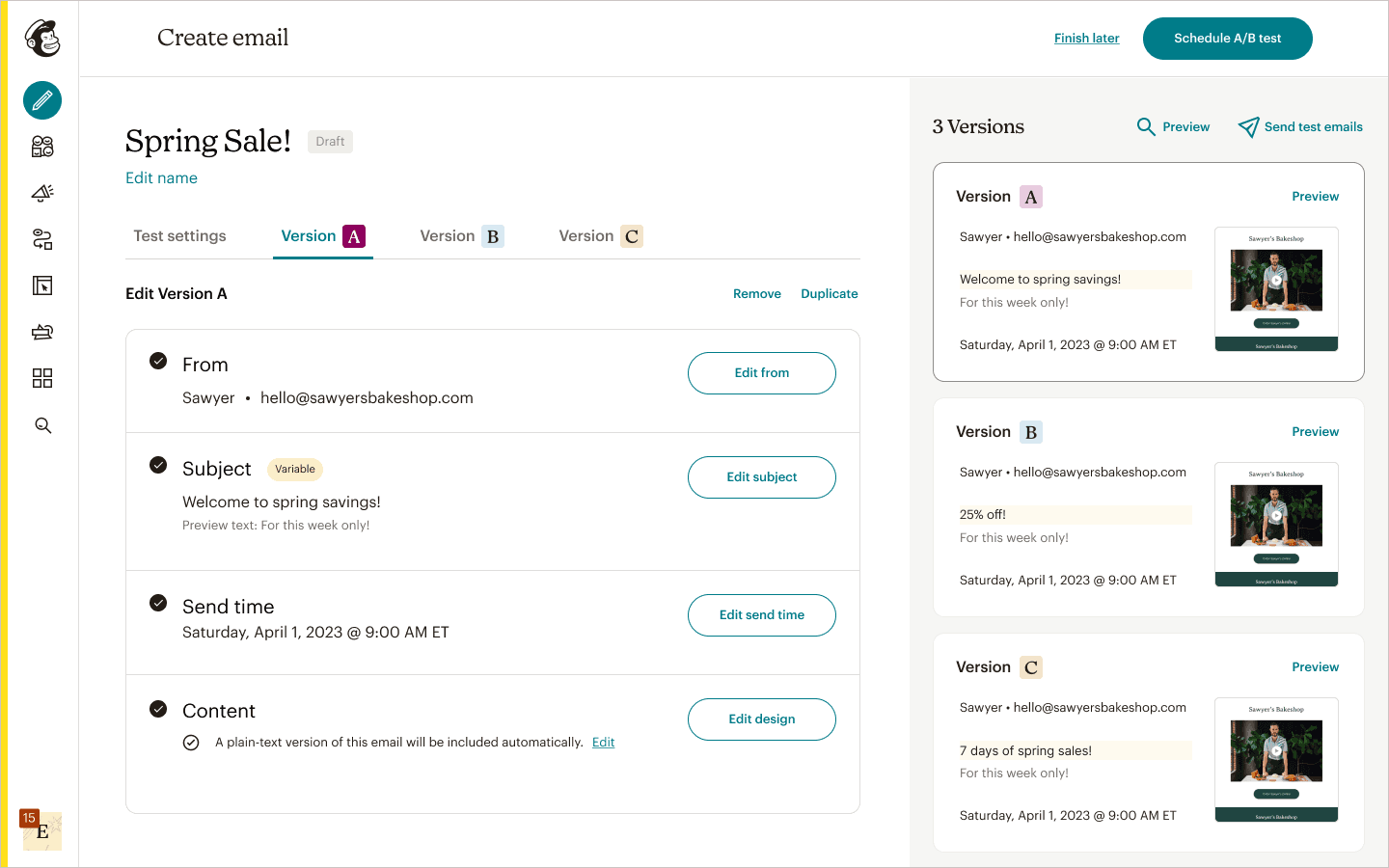

Marketers view test variations as independent from each other

Users have a mental model of sending out individual emails that can be freely edited, which disproved the previous assumption that users send out a single email with variants only in a specific field.

Process

User interviews revealed flaws in the assumed mental model & pointed to a more accurate one

Concept testing verified this with tangible workflows

Design implications

Allow test emails & assets to be independently edited

Single email with 3 field variants

3 emails with field variants

Insight #4

Marketers need approachable language to explore testing

Users were confused by terms such as 'multivariate testing', which made them hesitant to explore testing.

Process

User interviews, card sorting, & surveys showed that simpler terms provided clarity about A/B testing

Glossary of terms

Design implications

Product branding and content terms were made more intuitive & approachable

Key Design Decisions

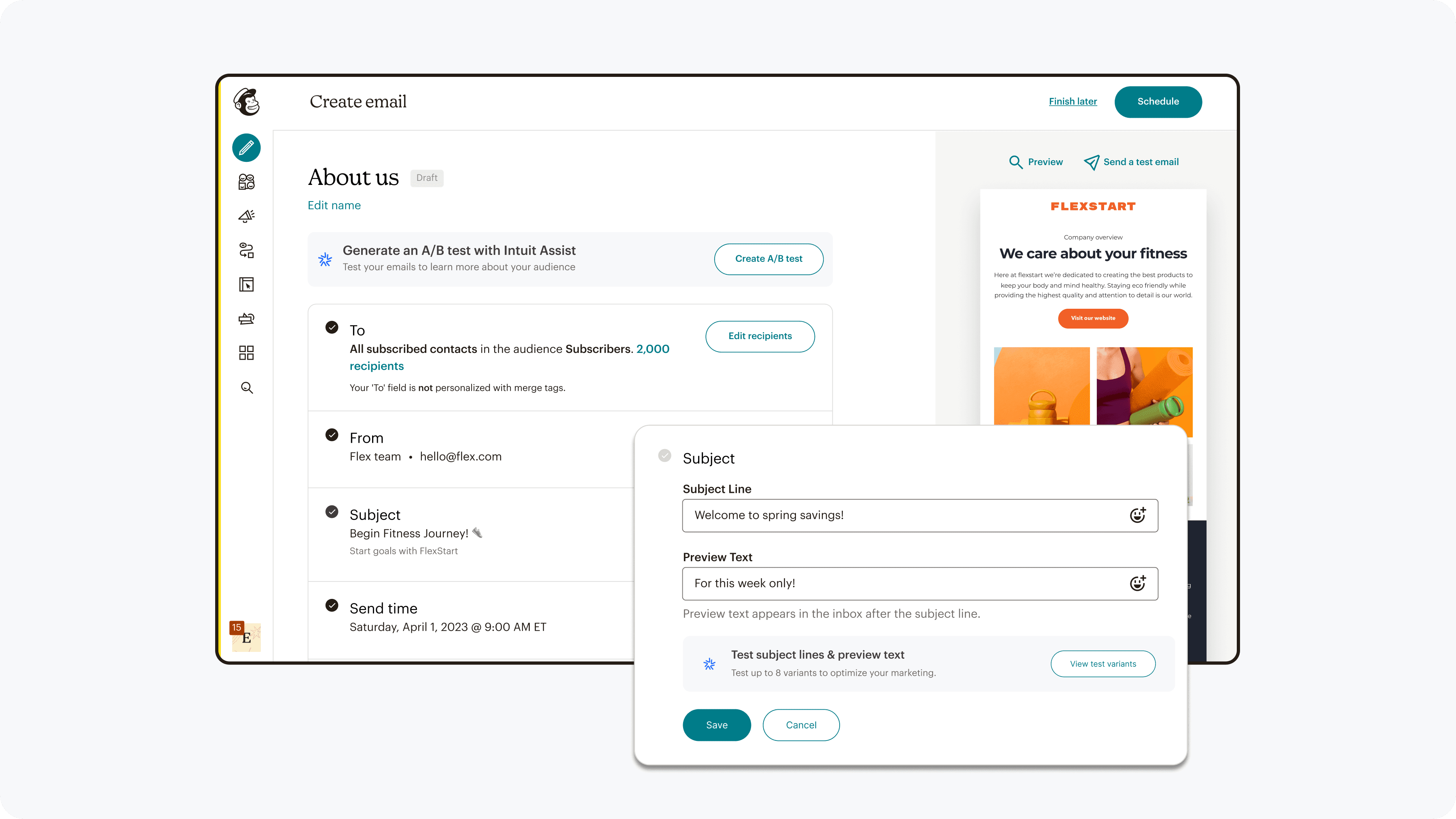

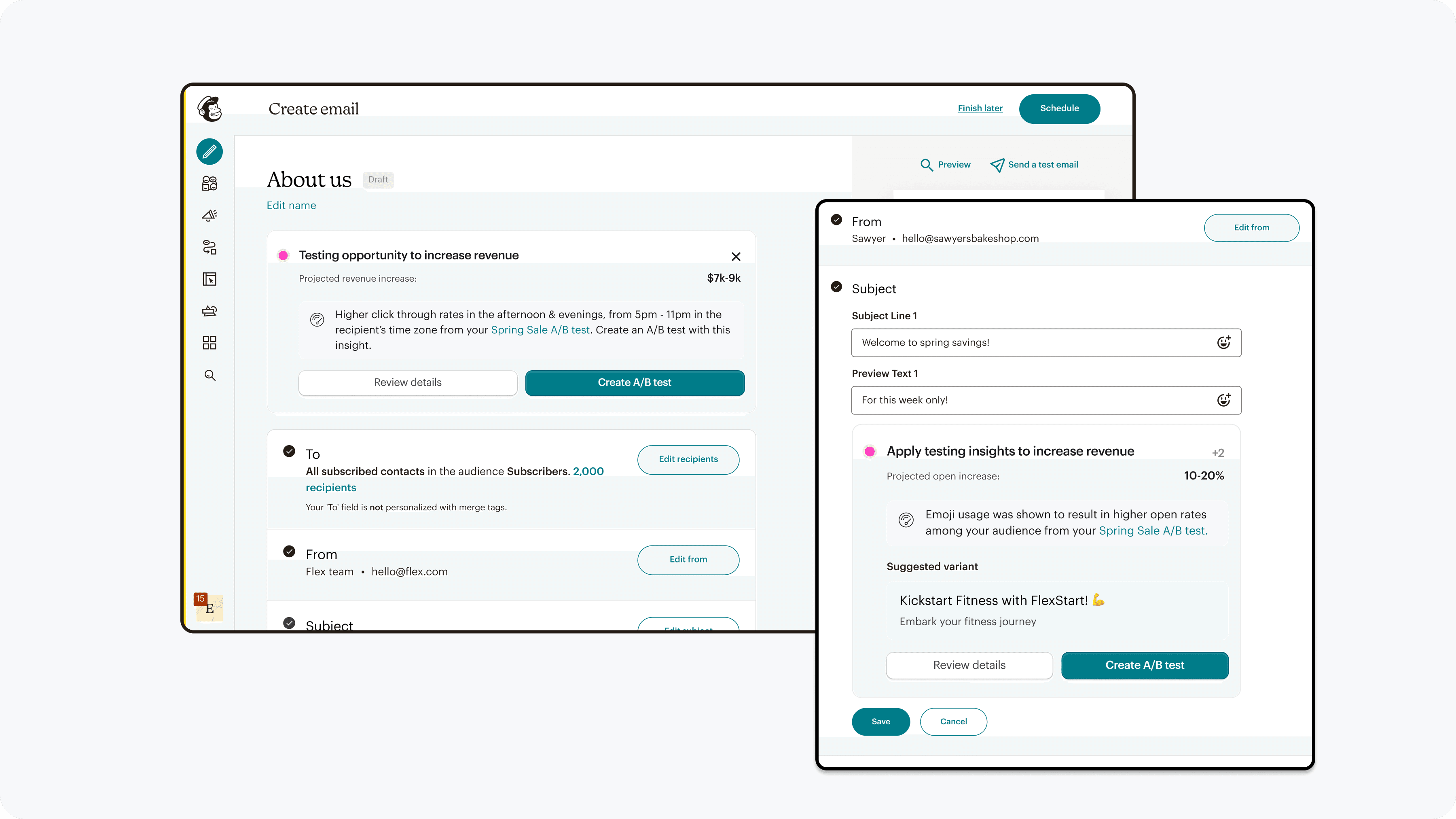

Decision #1

Entry point placed by the field to be tested

Placement of the test CTA was determined by in-product A/B testing

Options

We experimented with placing the CTA earlier in the current funnel, as well as at various spots on the campaign creation page.

Decision making

The CTA was placed by the field to be tested based on:

A sustained increase in exploration rate in in-product A/B tests

Alignment with user research insights on when users want to create a test

Testing various entry point locations

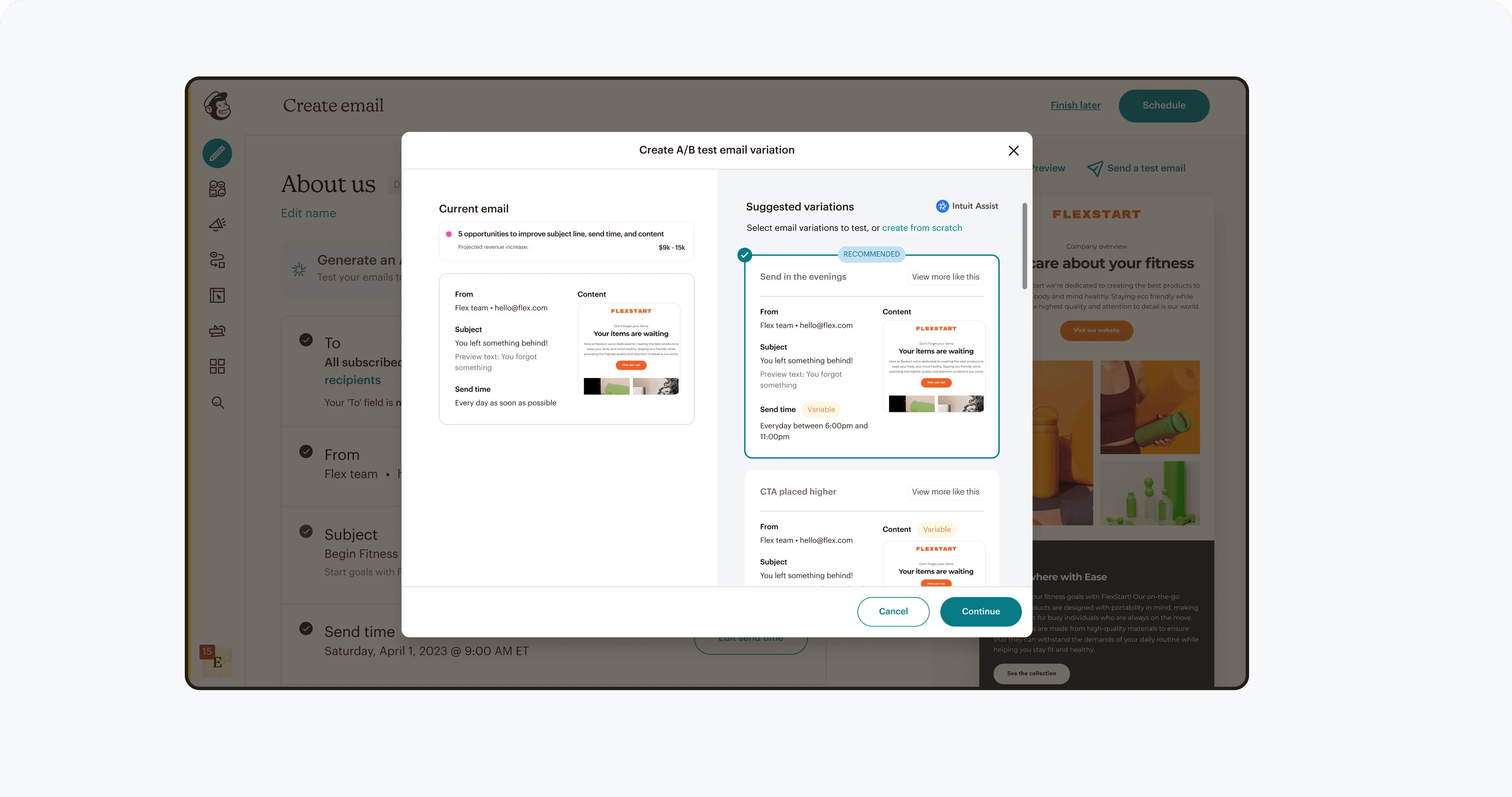

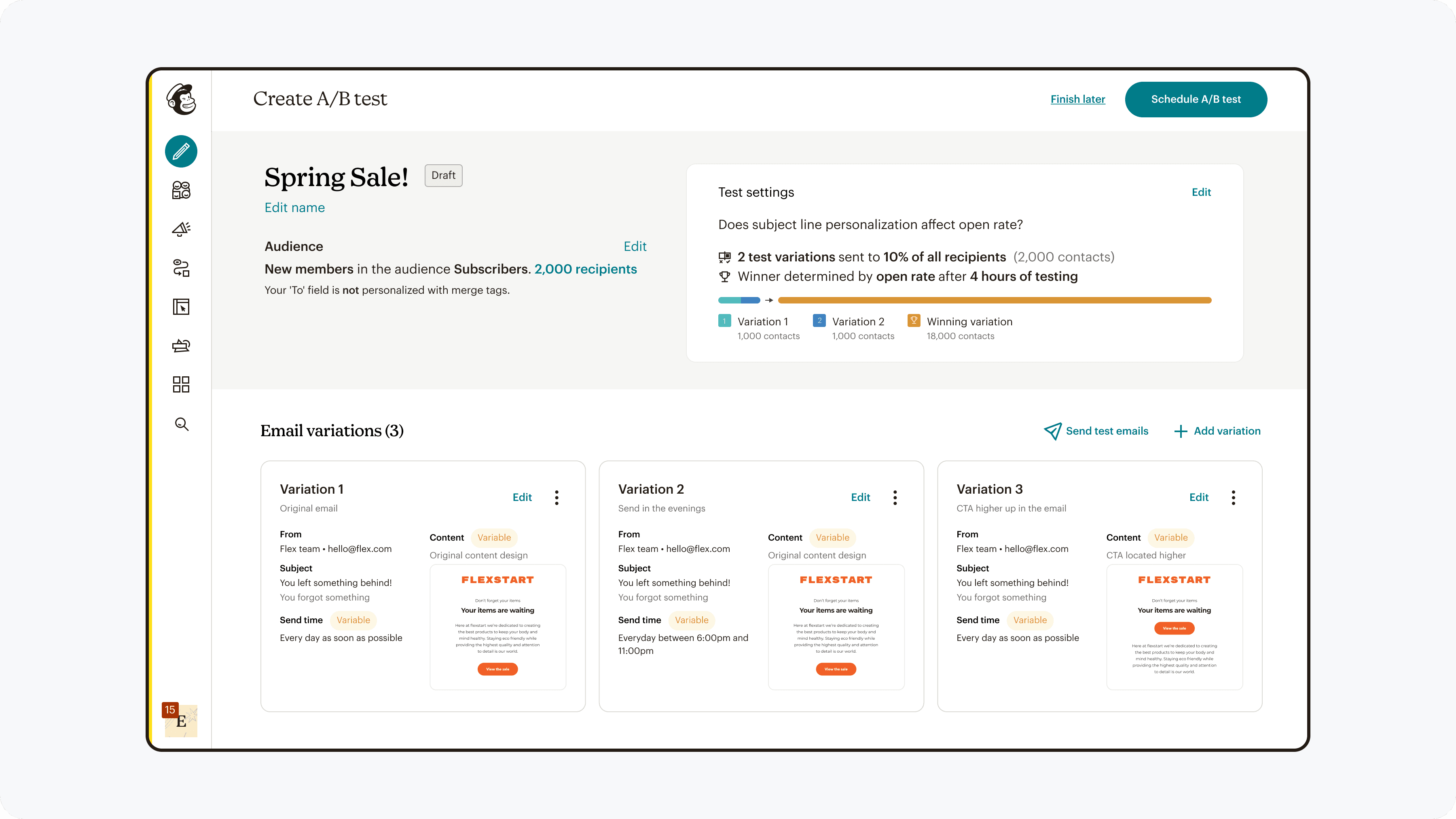

Decision #2

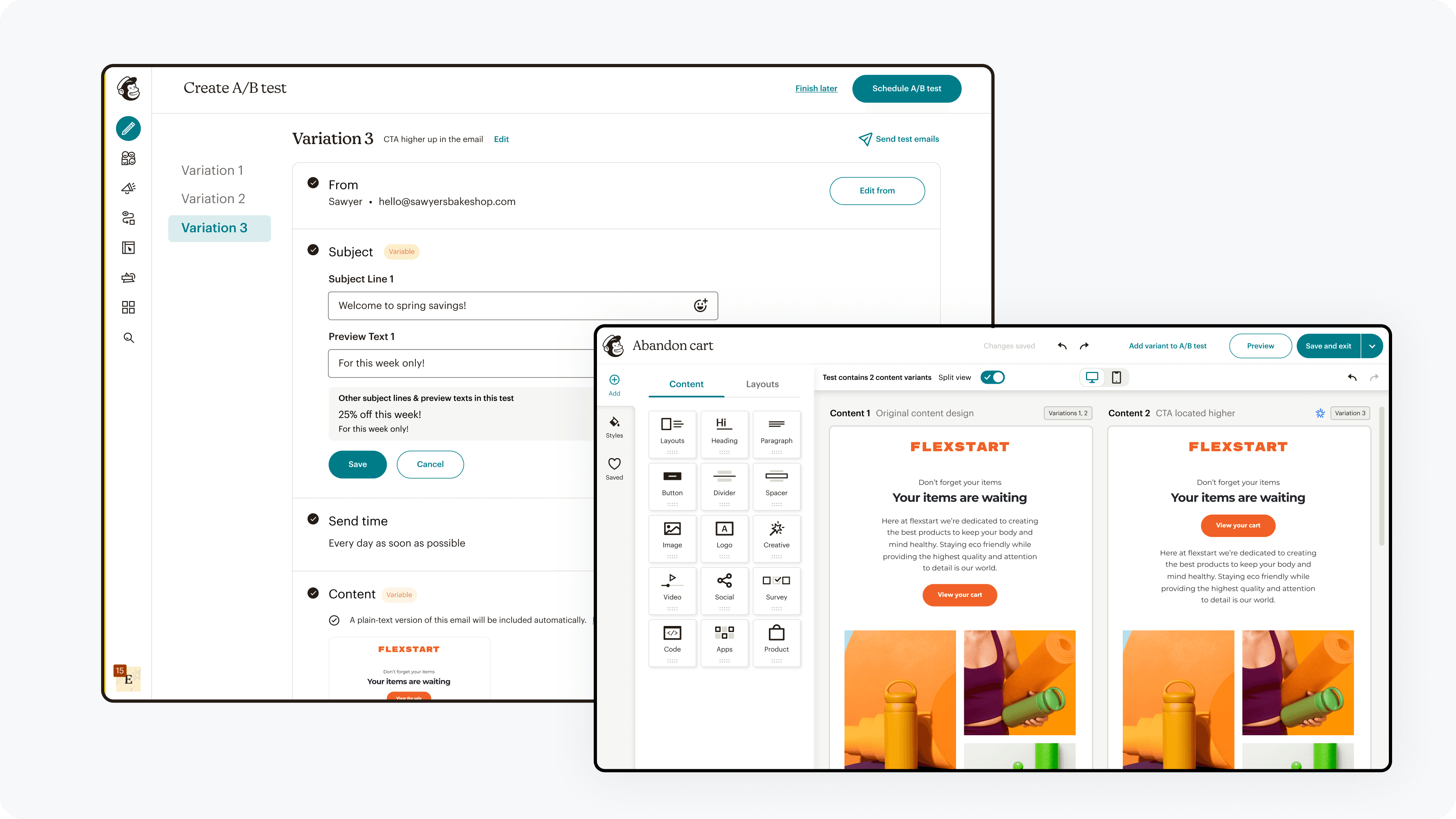

Hybrid workflow between campaign & A/B test creation

I designed & validated an intuitive workflow that balanced existing creation workflows with A/B testing

Options

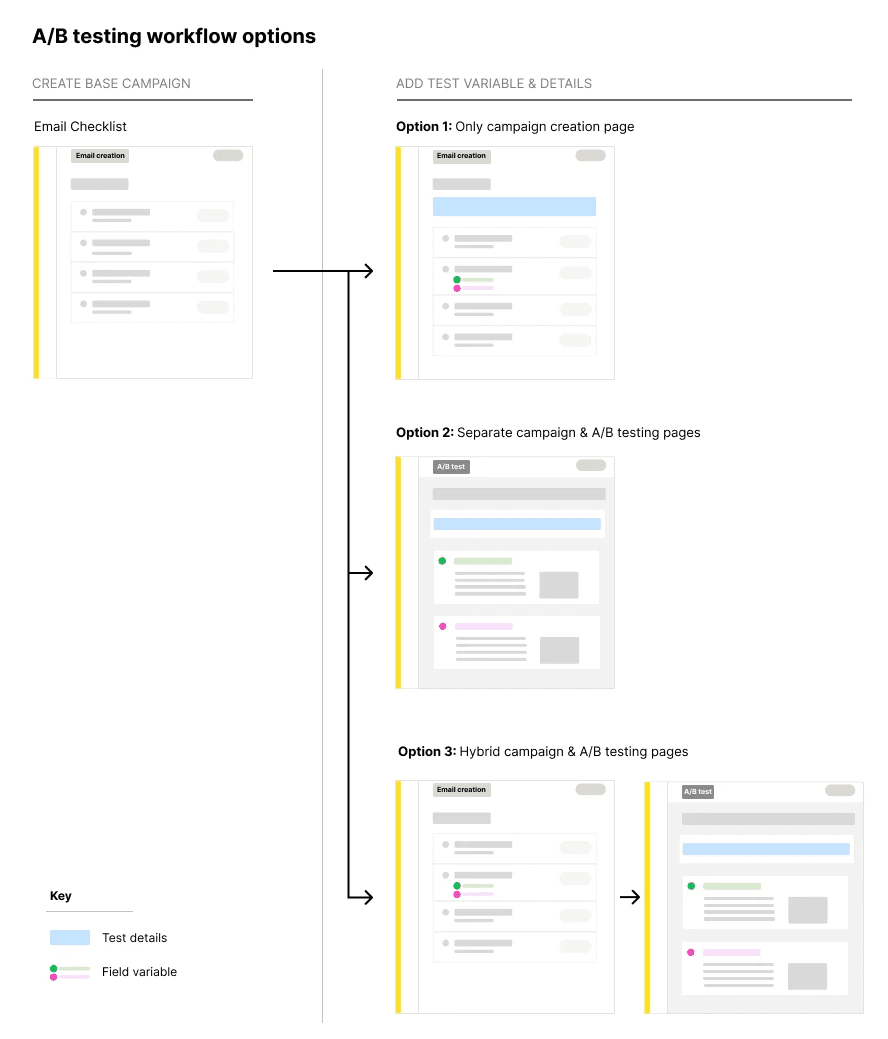

There were 3 options for integrating the A/B testing creation experience

Single page experience: Add A/B testing features to the campaign page

Two separate experiences: Keep campaign creation page as is, & add A/B test actions on a separate page

Hybrid experience: Add A/B testing variation creation to the campaign creation page, and add A/B test detail creation on a separate page

3 different workflow options

Decision making

The hybrid approach was selected because:

Moderated interviews revealed distinct stages of base creation, test variation creation, and review

Constant validation check-ins with subject matter experts (internal marketers) supported it

Concept testing showed quantitatively that it was the most intuitive

It maintained learned creation patterns

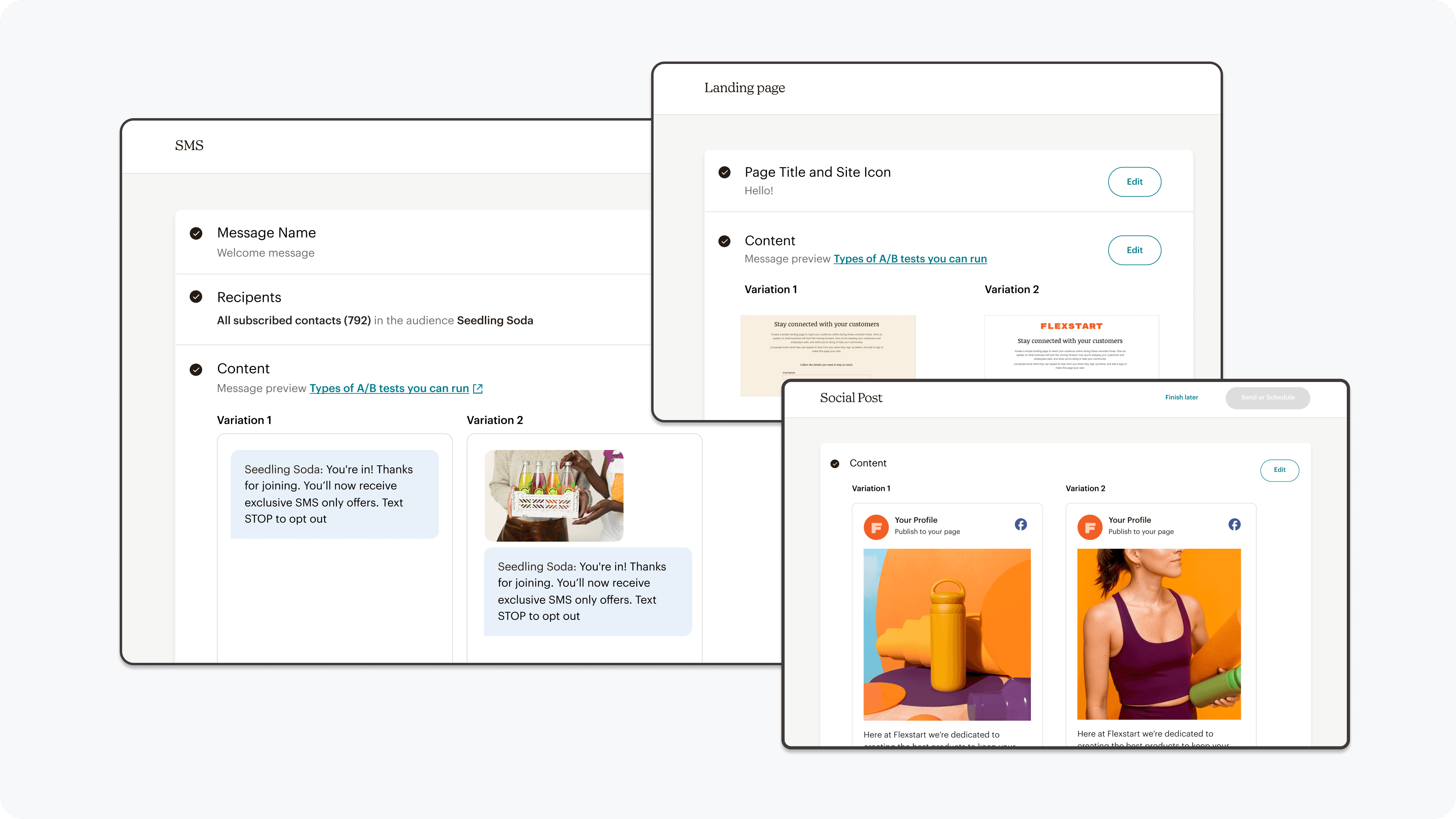

Scaled across all campaign creation experiences (email, SMS, forms, landing pages, automations)

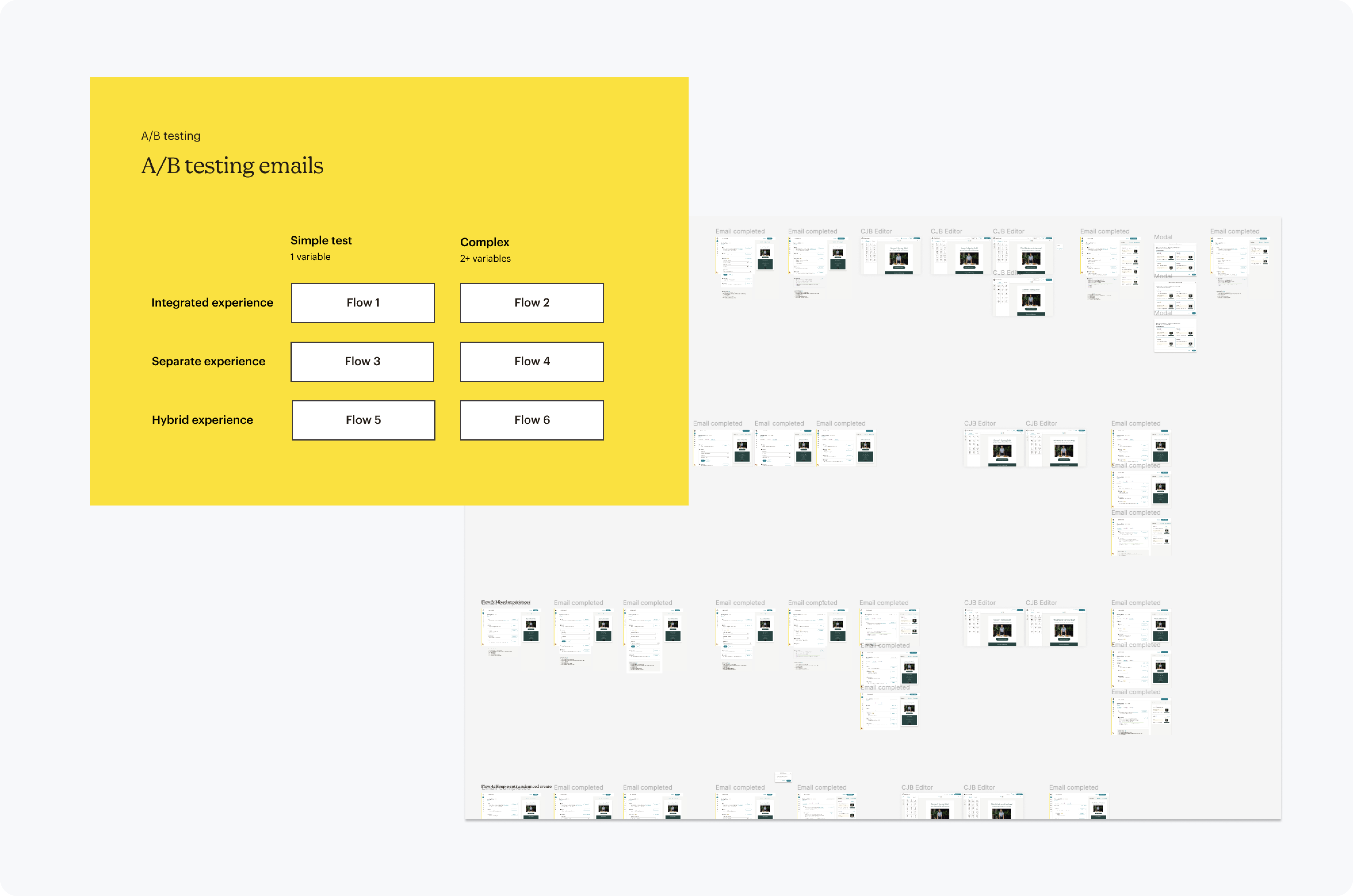

Usability testing workflows across different use cases

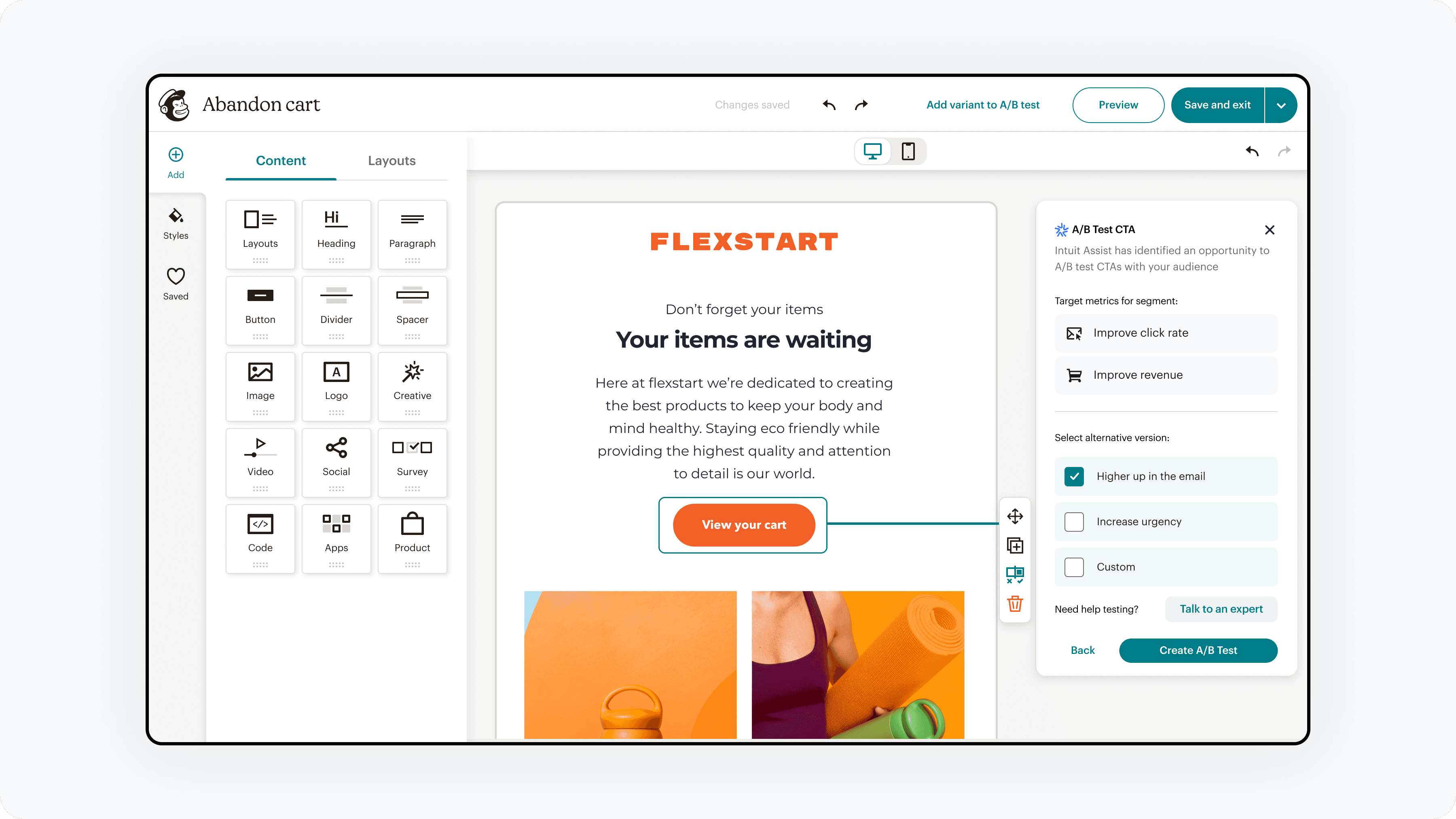

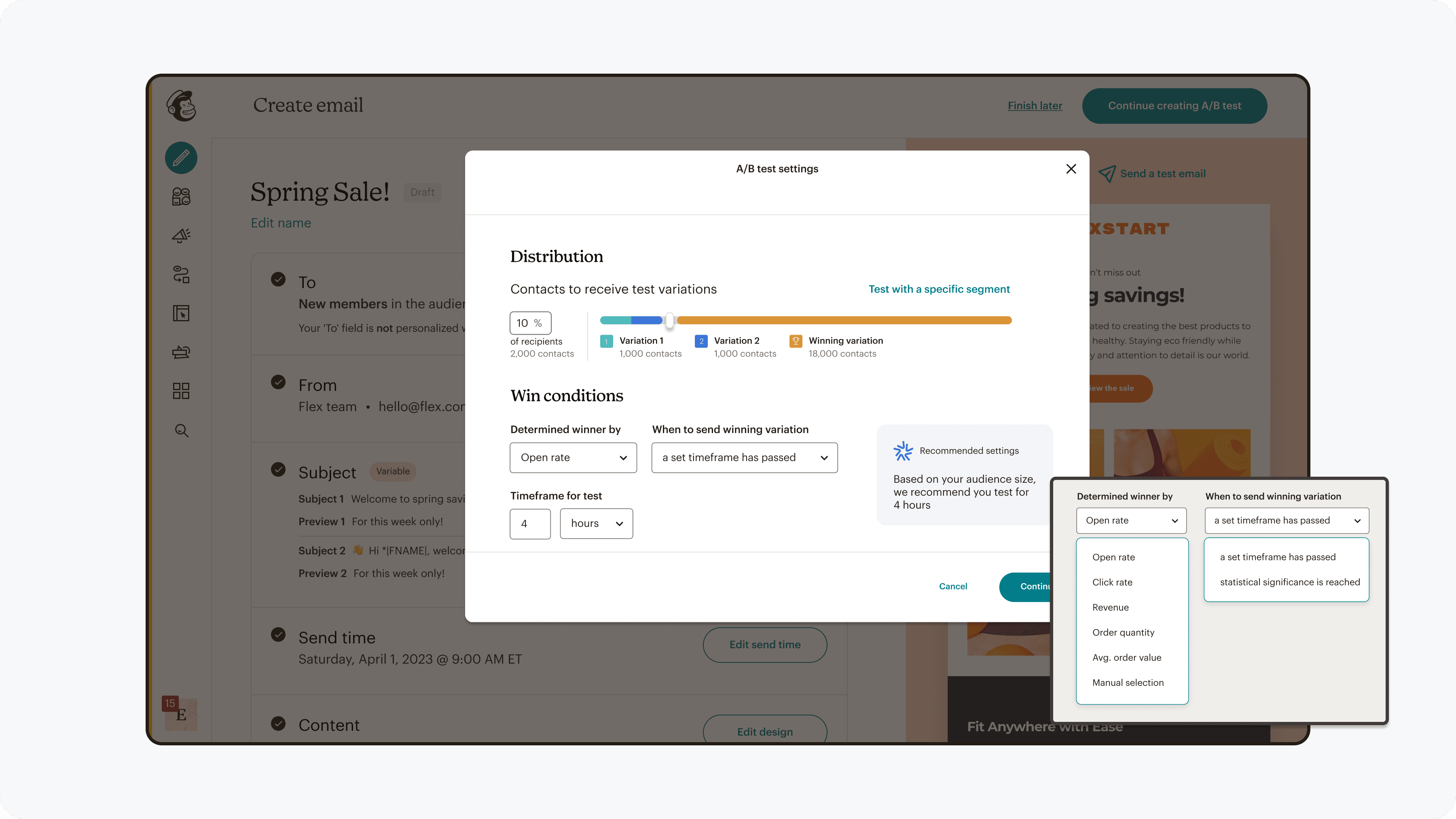

Decision #3

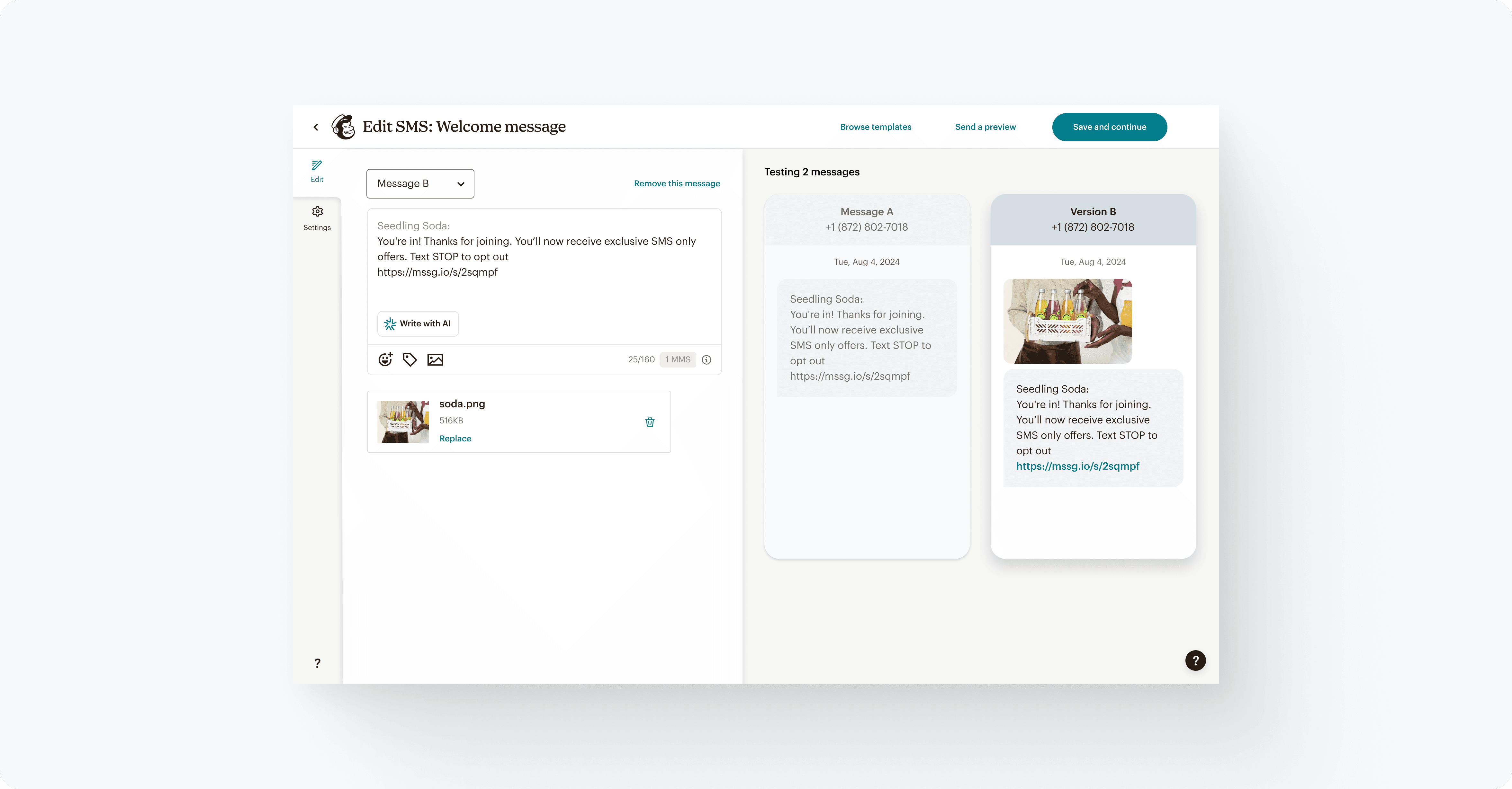

Include comparison for test variation creation & review

Users need to compare variations to create test hypotheses intentionally and to feel confident before sending.

Options

Including comparison would add visual density, though user interviews suggested it was a user need.

Decision making

Comparison was determined to be essential based on:

Contextual inquiry showing users switching constantly between test variations

Usability testing confirming this pattern

A/B testing this pattern in the SMS test workflow, where it proved useful

Providing a way to quickly compare versions

Key Product Tradeoffs

Building experimentation tools involved navigating multiple product, engineering, and user constraints. These tradeoffs shaped how the feature was delivered.

Tradeoff #1

Launching on SMS before Email

Email was the core channel where A/B testing would have the largest impact, but it also had the most complex infrastructure and a longer development roadmap.

Tension

The product vision was to launch experimentation directly within email campaigns, but engineering timelines and the email team’s roadmap made this difficult to deliver quickly.

Decision

To reduce risk and learn faster, we launched the experimentation workflow first on SMS campaigns. This allowed us to validate the core workflow and user behavior before expanding to email.

Outcome

Launching on SMS provided early signals on how marketers structured tests and interacted with results, which helped inform future iterations before scaling to the larger email ecosystem.

Tradeoff #2

Reducing experiment send time

Early prototypes required 3 hours to send a test campaign and collect results.

Tension

User interviews revealed that marketers expected experimentation to fit within a much shorter campaign preparation window.

Decision

After sharing these findings with engineering leadership, the team ran an engineering spike to explore performance improvements.

Outcome

The team reduced the send window from 3 hours to ~30 minutes, making experimentation practical within typical campaign workflows.

Tradeoff #3

Limiting initial win criteria

Marketers typically evaluate tests using metrics like open rate, click rate, and revenue.

Tension

Supporting multiple automated win criteria at launch required complex backend data pipelines that would significantly delay the release.

Decision

Instead of delaying the feature, we launched with a simpler model where users could manually review results and select a winning variant.

Outcome

This allowed marketers to run experiments immediately while giving the team time to build more advanced automated win criteria in later iterations.

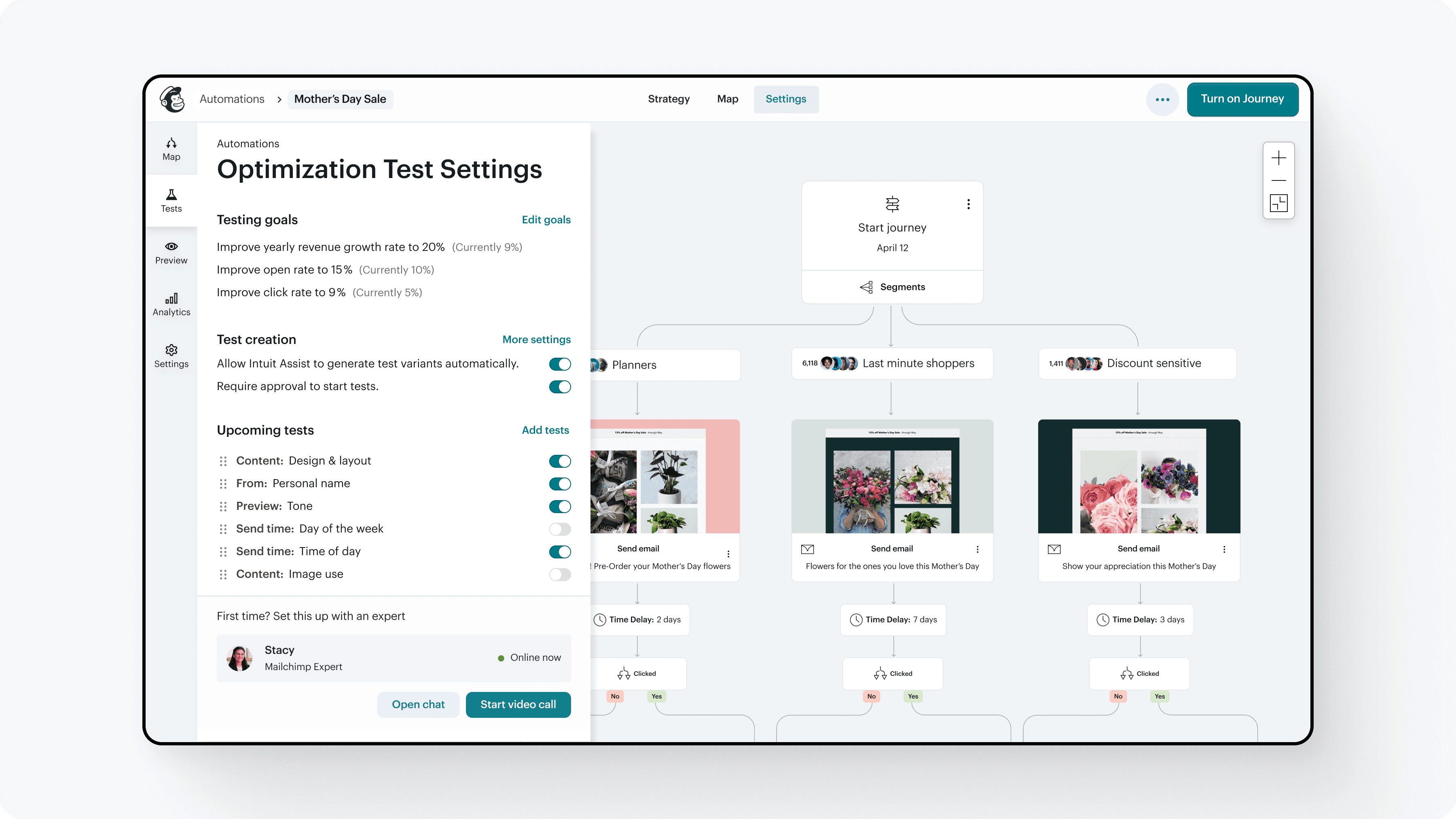

Solution

Final experience

A unified A/B testing experience that works across channels, supports simple to advanced tests, and provides clearer guidance and insights at every step.

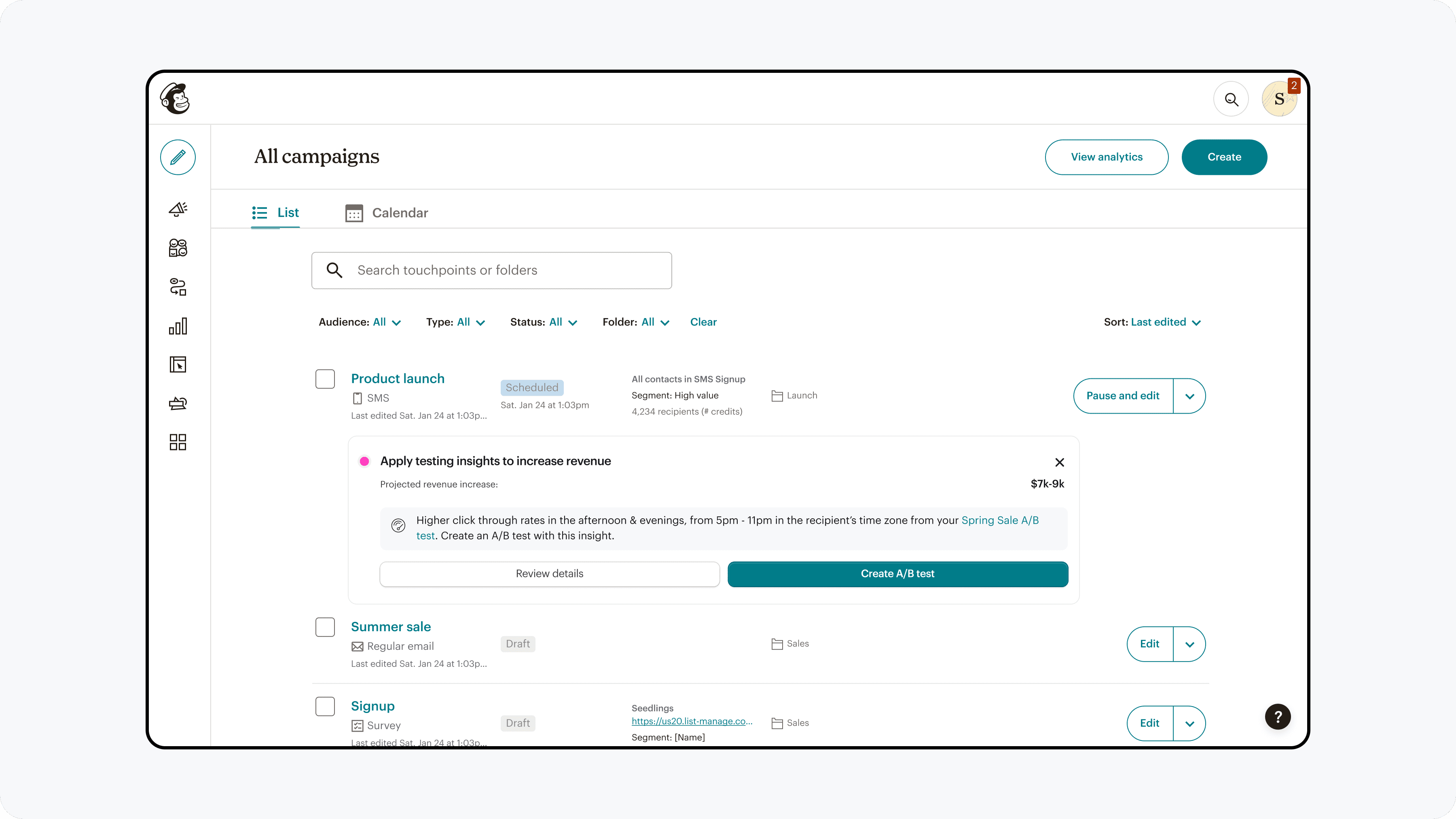

Discoverability

A/B testing entry points were aligned with marketers' natural workflow of creating a base campaign and then adding a variable to test.

Functionality

A/B testing capabilities were expanded & refined to enable and guide users to create effective tests. AI integration was refined from an overwhelming set of choices to a few curated options with explanations.

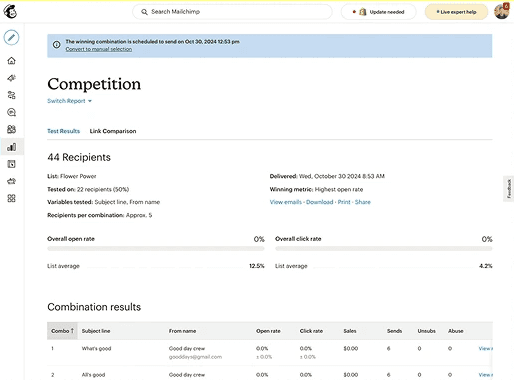

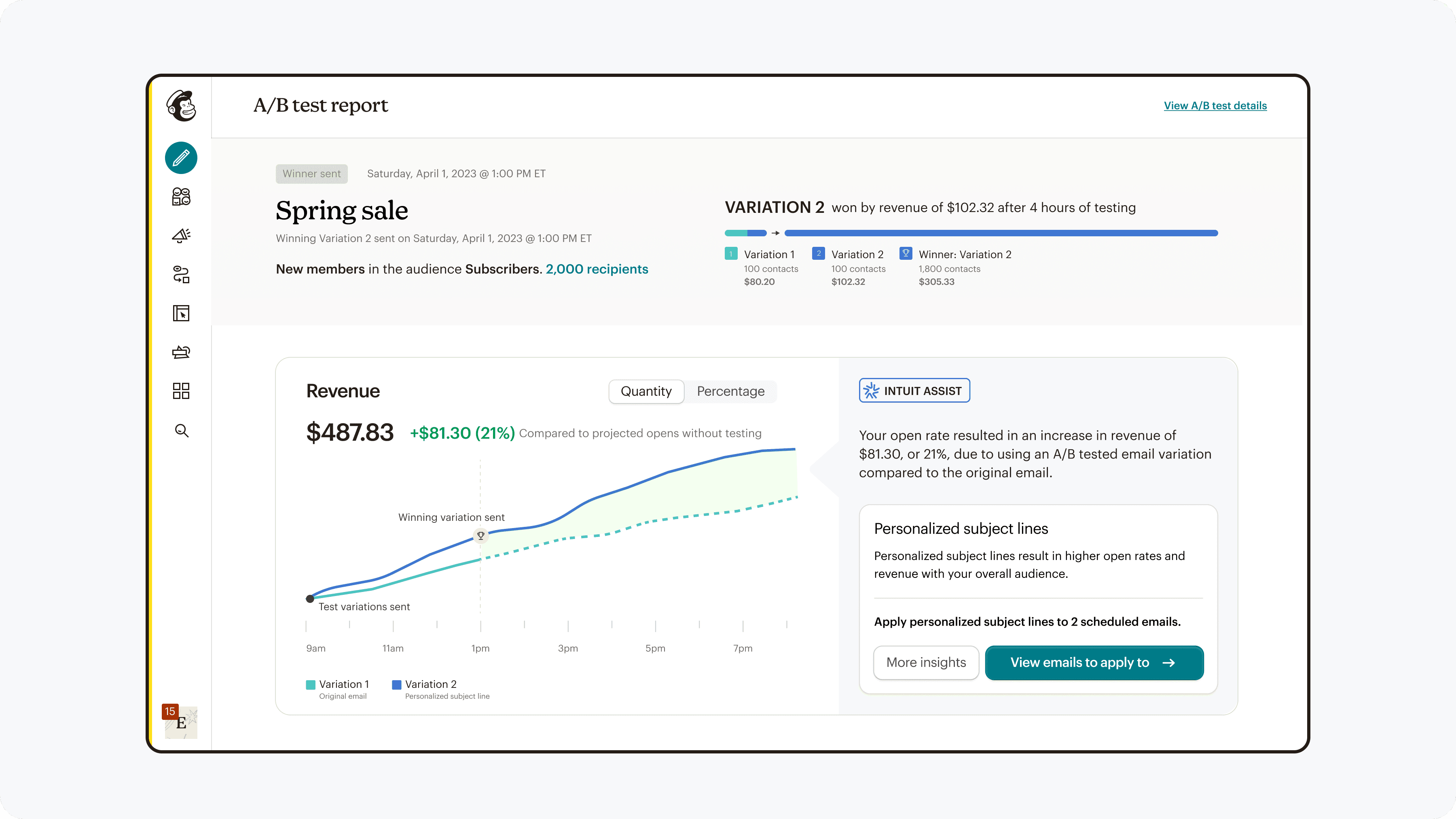

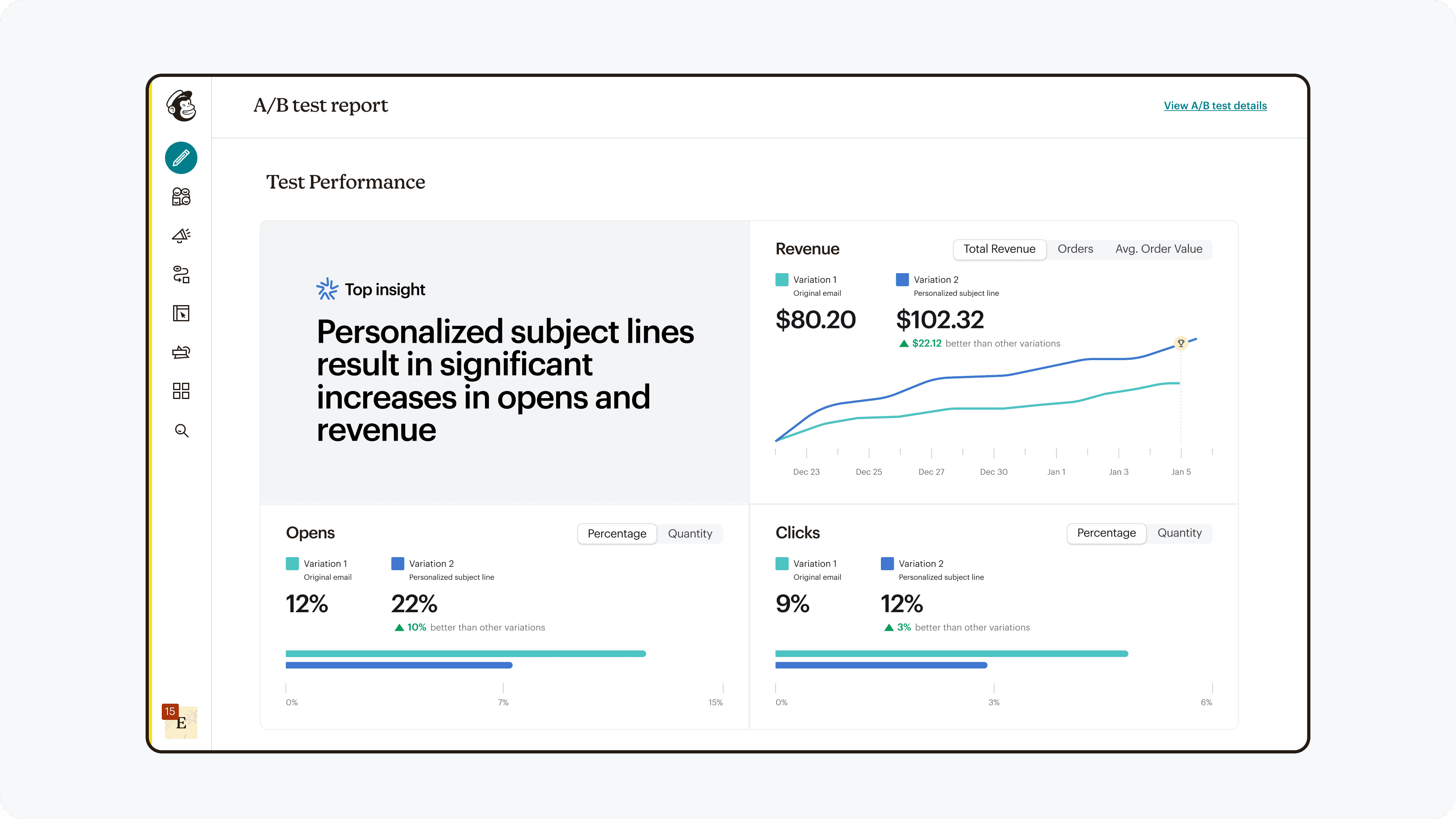

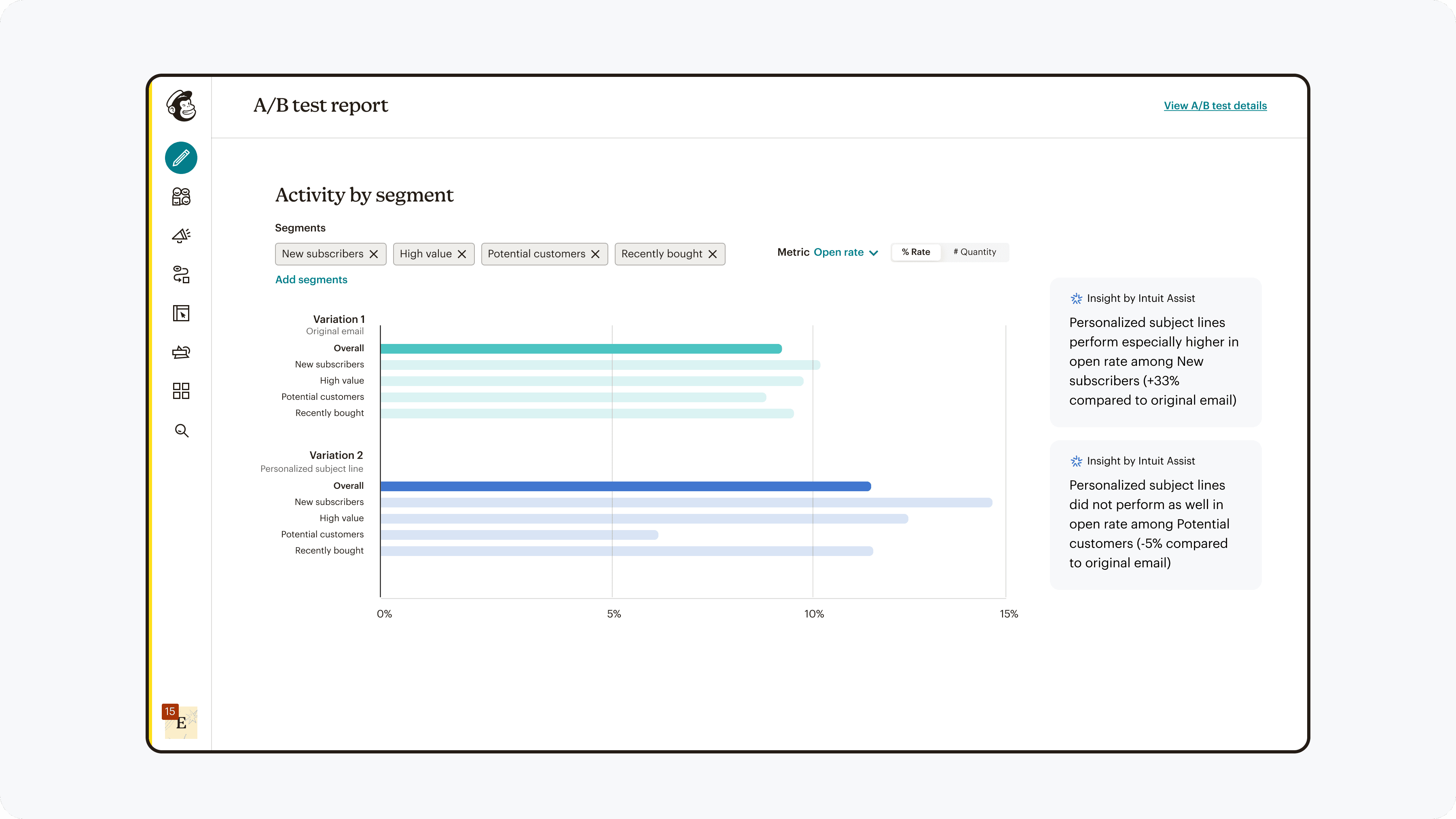

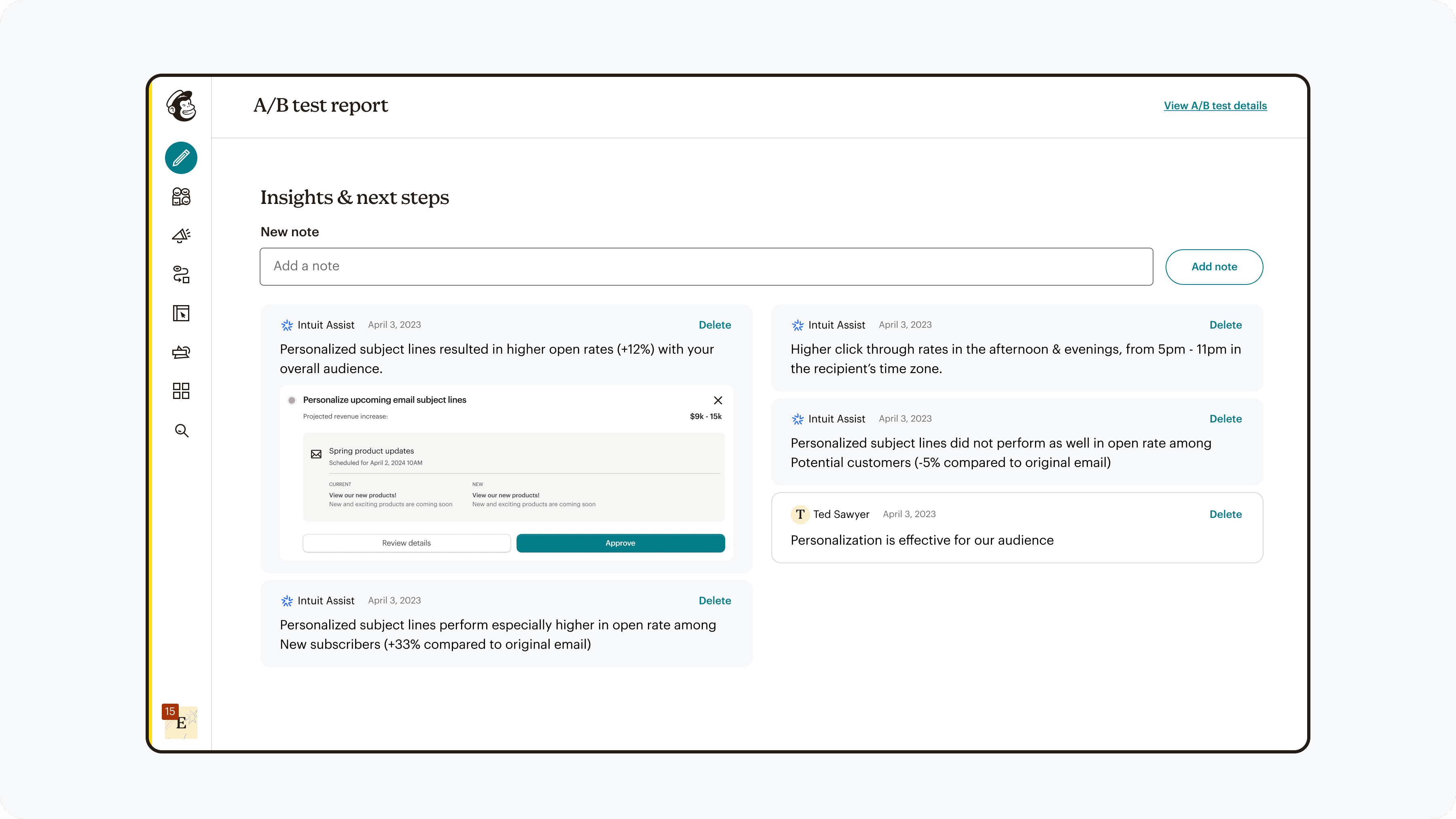

Interpretability

Results were presented in a way that allowed for easy analysis and gave direction for subsequent actions.

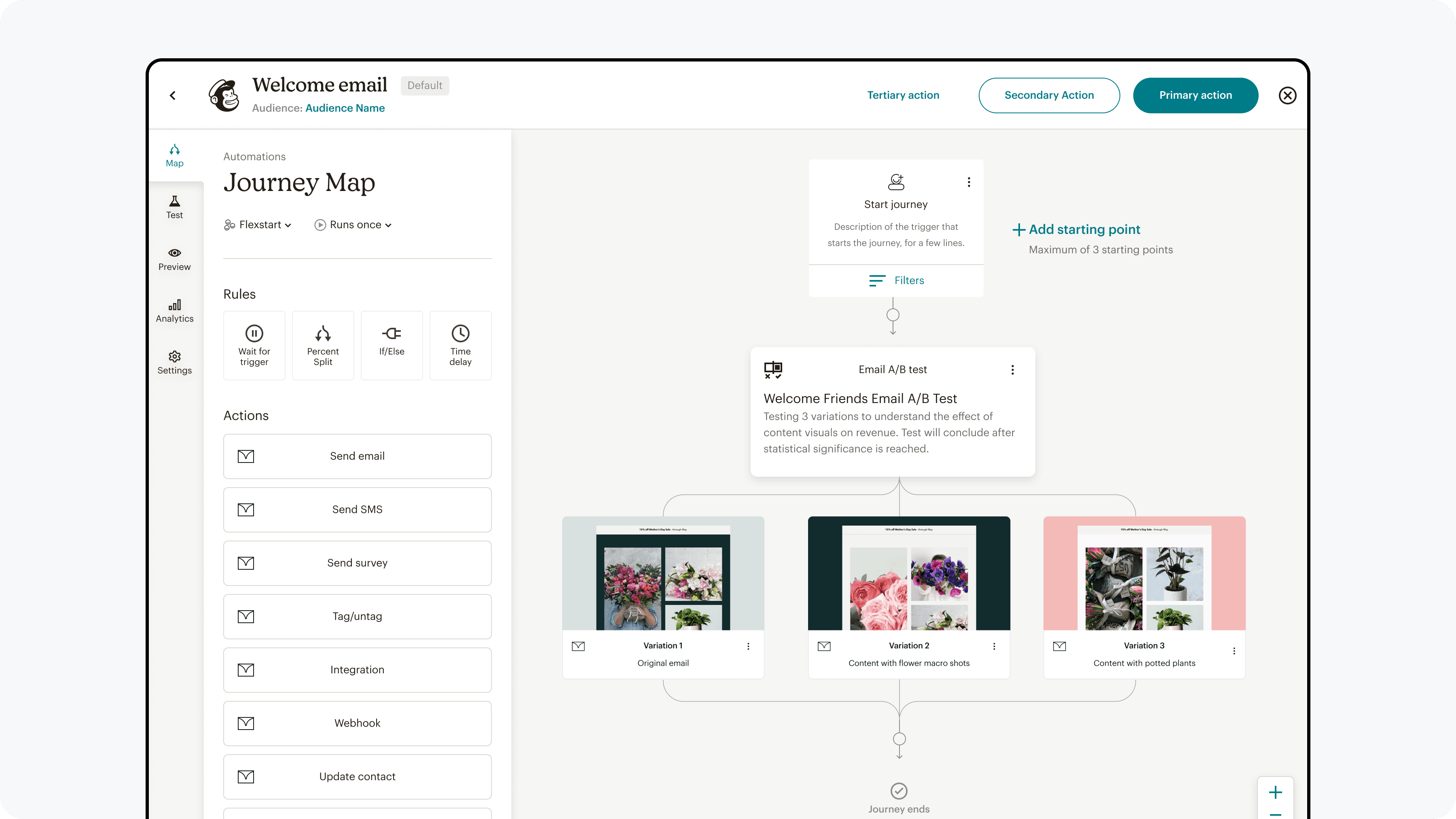

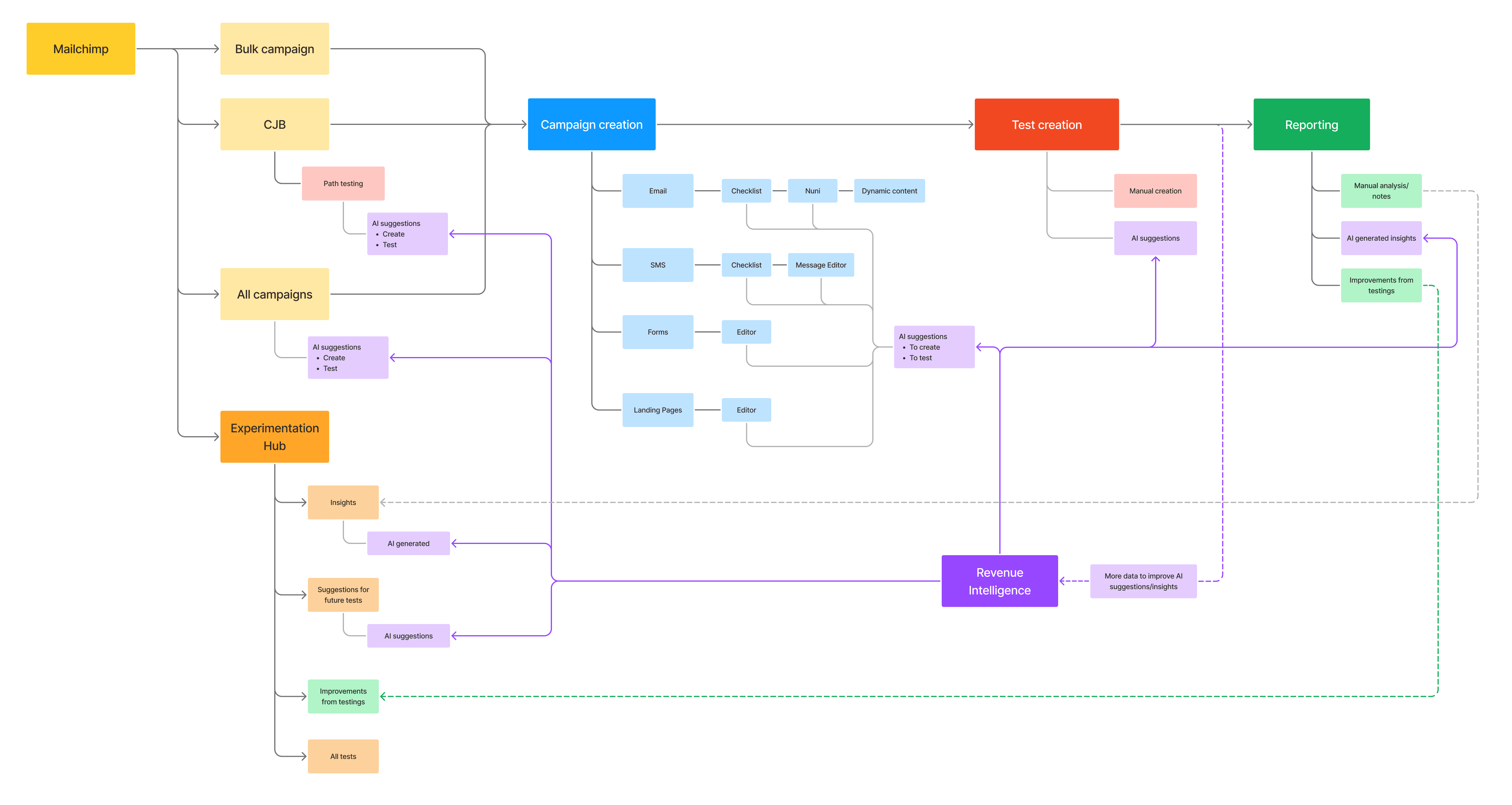

End-to-end experience across all touchpoints

This project addressed the full lifecycle of running an experiment, from campaign creation to result interpretation and iteration. Experimentation spans multiple surface areas, so a high-level mapping was needed to ensure continuity throughout.

High-level user flow through the product

Design system contribution

To ensure the experimentation framework could scale across Mailchimp products, I worked with the design systems team to translate key components into reusable patterns.

This included experiment configuration modules, comparison views, and result visualizations.

By integrating these into the design system, other teams could adopt experimentation capabilities more consistently across the platform.

Design system documentation

Impact

The team was able to launch A/B testing for SMS, and afterwards for forms. After 3 months of collecting data for SMS, we found the following results:

Discoverability:

138% boost in exploration

Functionality:

9% lift in completion

Interpretability:

22x increase in retention (1% → 23%)

This shows that the changes were effective. By monitoring results for forms and conducting deeper quantitative & qualitative research, the team can make any needed adjustments and continue rolling out to other marketing channels.
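As a quick sanity check on how the retention figure above is expressed, the sketch below computes the relative increase and the fold change for a before/after rate. Only the 1% and 23% retention values come from the results above; the function names are hypothetical helpers for illustration, not part of any Mailchimp tooling.

```python
def relative_increase(before: float, after: float) -> float:
    """Increase expressed as a multiple of the starting value."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (after - before) / before


def fold_change(before: float, after: float) -> float:
    """Ending value expressed as a multiple of the starting value."""
    if before <= 0:
        raise ValueError("before must be positive")
    return after / before


# Retention rates from the SMS results, in percent.
retention_before = 1.0
retention_after = 23.0

increase = relative_increase(retention_before, retention_after)
fold = fold_change(retention_before, retention_after)

print(f"{increase:.0f}x increase ({increase * 100:.0f}%)")  # 22x increase (2200%)
print(f"{fold:.0f}x the starting rate")                     # 23x the starting rate
```

Going from 1% to 23% is a 22x increase (2,200%), which leaves retention at 23 times its starting rate. Stating both the multiple and the raw before/after values keeps the metric unambiguous.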

Reflections

Continual experimentation

Rather than treating research and testing as a phase, I learned to treat experimentation as an ongoing product capability. Running fast experiments (in-product tests, unmoderated studies, and quick surveys) allowed us to resolve conflicting internal perspectives quickly and move decisions forward with evidence. This approach reduced debate cycles and helped us converge on stronger solutions faster.

Balancing simplicity & flexibility

One of the core challenges in designing experimentation tools is balancing flexibility with usability. While power users wanted advanced controls, most marketers struggled just to run their first test. We focused on a guided workflow that reduced cognitive load and helped users make better decisions step-by-step, while keeping the system extensible for future advanced features. This approach allowed us to improve adoption without sacrificing long-term platform flexibility.

Adapt to changing priorities

Product roadmaps evolve, and strong work needs to survive those shifts. To make the project resilient, I documented the experimentation framework and incorporated key interaction patterns into Mailchimp’s design system. This allowed teams to adopt parts of the solution incrementally even when priorities changed. Designing for reuse ensured the work continued to influence the product beyond the initial project scope.