Overview

Led the redesign of Mailchimp’s experimentation platform to help marketers run effective A/B tests

Role

Lead Product Designer

Team

Product Manager

Engineers (4)

Company

Mailchimp

Experimentation

Timeline

2024 - 2025

Key contributions

End-to-end product ownership of design strategy and execution, including MVP, vision state, prioritized rollouts, & sunset plan.

Strategic alignment & leadership buy-in by highlighting issues & opportunities, securing new team formation & a prioritized roadmap.

Actionable user insights through prioritized research methods, including moderated interview sessions, heuristic evaluations, competitive research, surveys, & usability tests.

Scalable design system for a multi-channel framework enabling consistent patterns across multiple platform areas.

Data-informed direction through in-product A/B testing and usability testing.

Problem

Although experimentation is critical to marketing, very few Mailchimp users were using A/B testing

Data analysis showed that only 0.34% of Mailchimp users were using A/B testing, much lower than the expected industry average of

95% of users had not used A/B testing in Mailchimp

This created a competitive risk as users churned to platforms with better experimentation workflows.

Interviews revealed 3 core reasons users were not testing:

Discoverability

Entry points were misaligned with natural workflows, with 87% of users unaware of testing features.

Functionality

The current capabilities were limiting & confusing, with unintuitive patterns, inability to test most campaign types, lack of previews, & inconsistent UI.

Interpretability

Results were obscure & difficult to act upon, as reports were missing key data points & formatted without user intention.

Research

Uncovering behaviors, drivers & blockers, while validating with testing & experimentation

Each stage of the process required different methodologies, chosen to fit its goals, timelines, and efficacy.

20+ moderated interviews

Past research consolidation

Current usage data analysis

Competitive research

Heuristic walkthrough

Customer service tickets

7+ concept & usability tests

In-product A/B testing

Guerrilla research

Collaboration with subject matter experts

Card sorting

Surveys

The insights gathered informed foundational direction, guided user behavior, & improved usability. The following are a few key insights from research.

Insight 1

A/B testing needs vary vastly in complexity

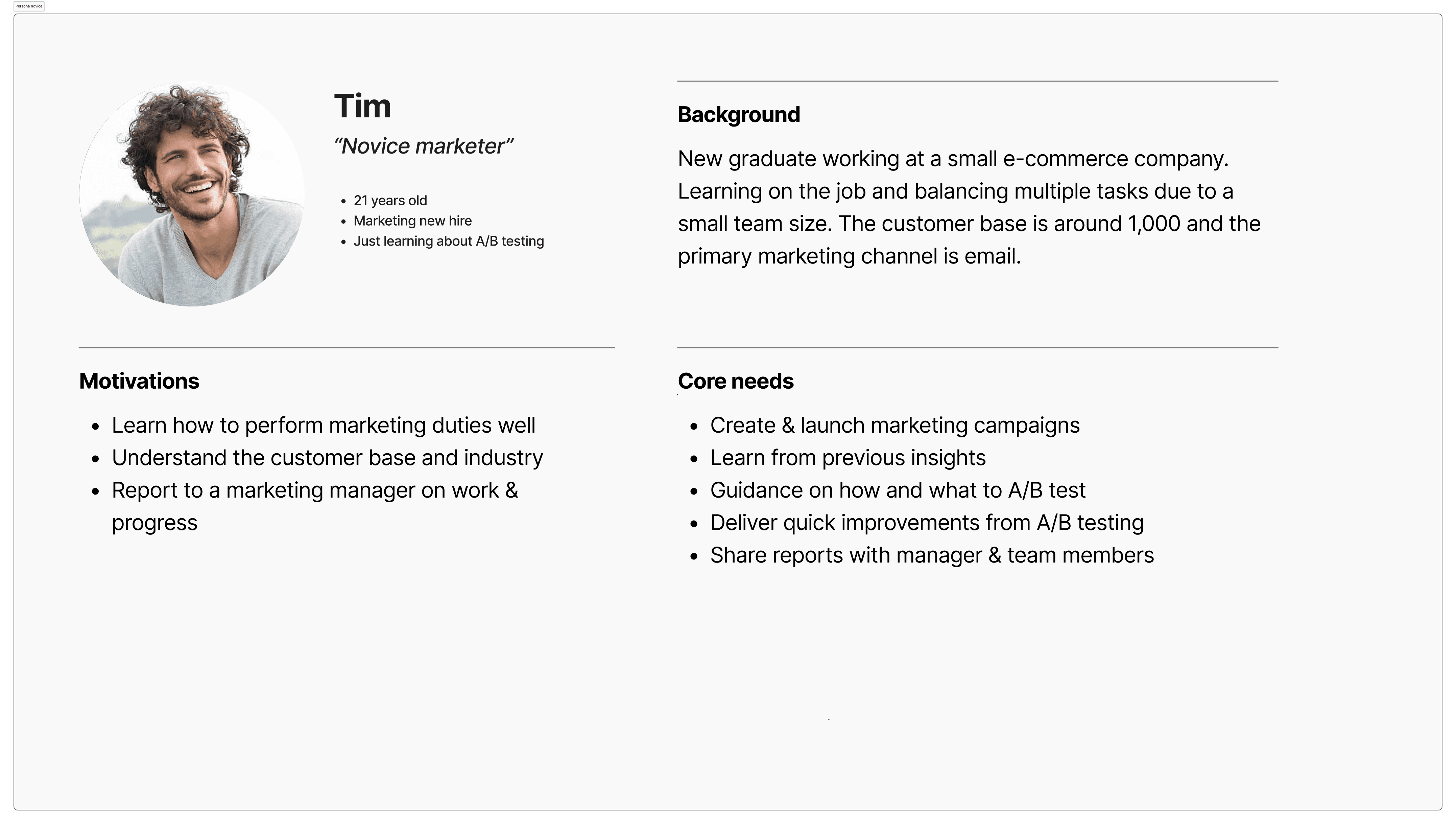

A/B testing needs evolve with a marketer's experience: early-career marketers need more guidance & simplicity to learn about their general audience, while advanced marketers need more flexibility to test more specific hypotheses.

Personas were created to guide testing flows through both of these equally important perspectives.

Personas of novice & advanced marketers

Insight 2

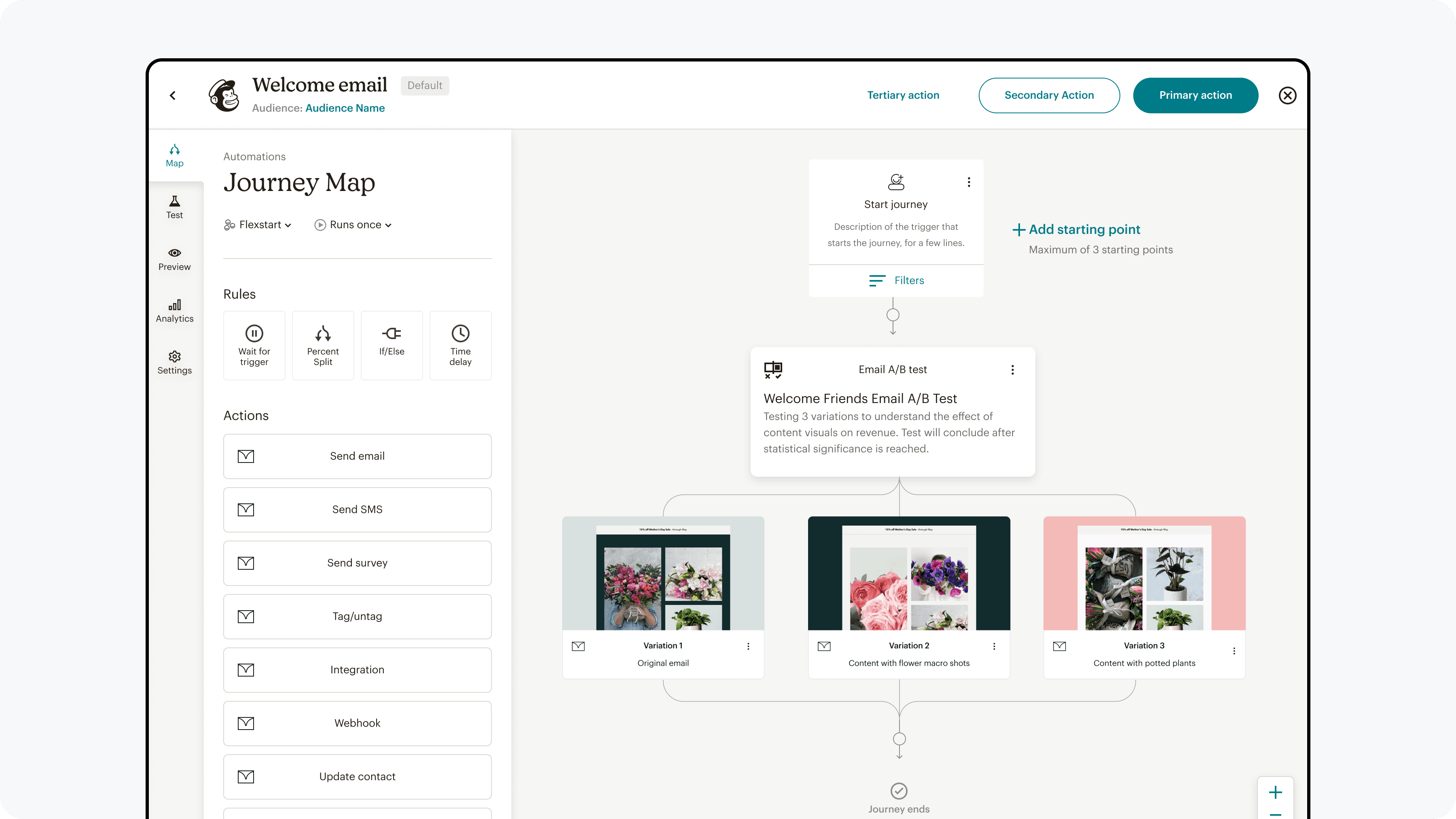

The base campaign is defined before adding test variations

Users were observed to create base campaigns first to meet their core campaign needs, then add test variations to test hypotheses.

The journey map reflected this flow to guide experiments and the eventual placement of the entry point into the test creation flow.

Journey map of the process

Insight 3

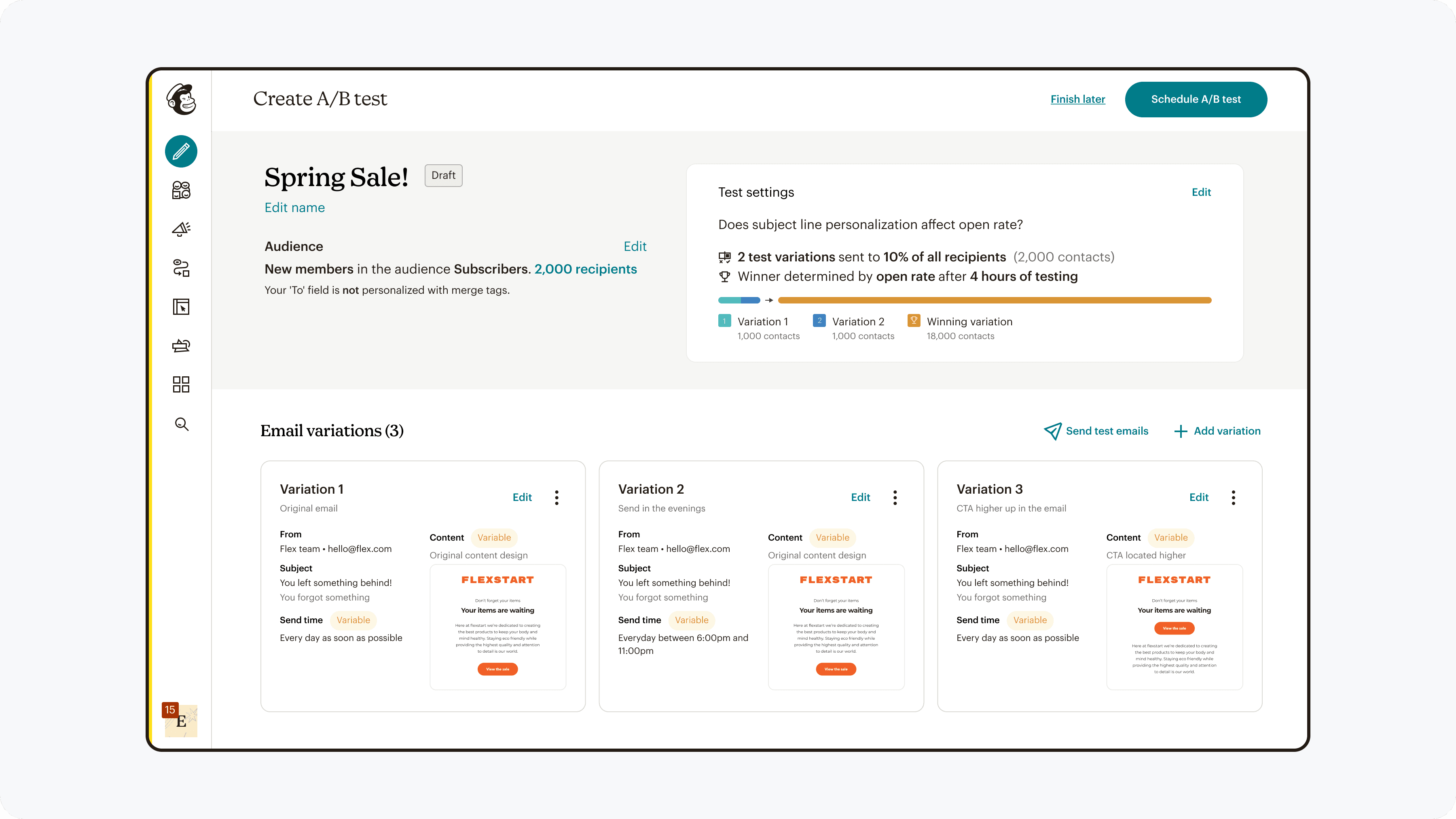

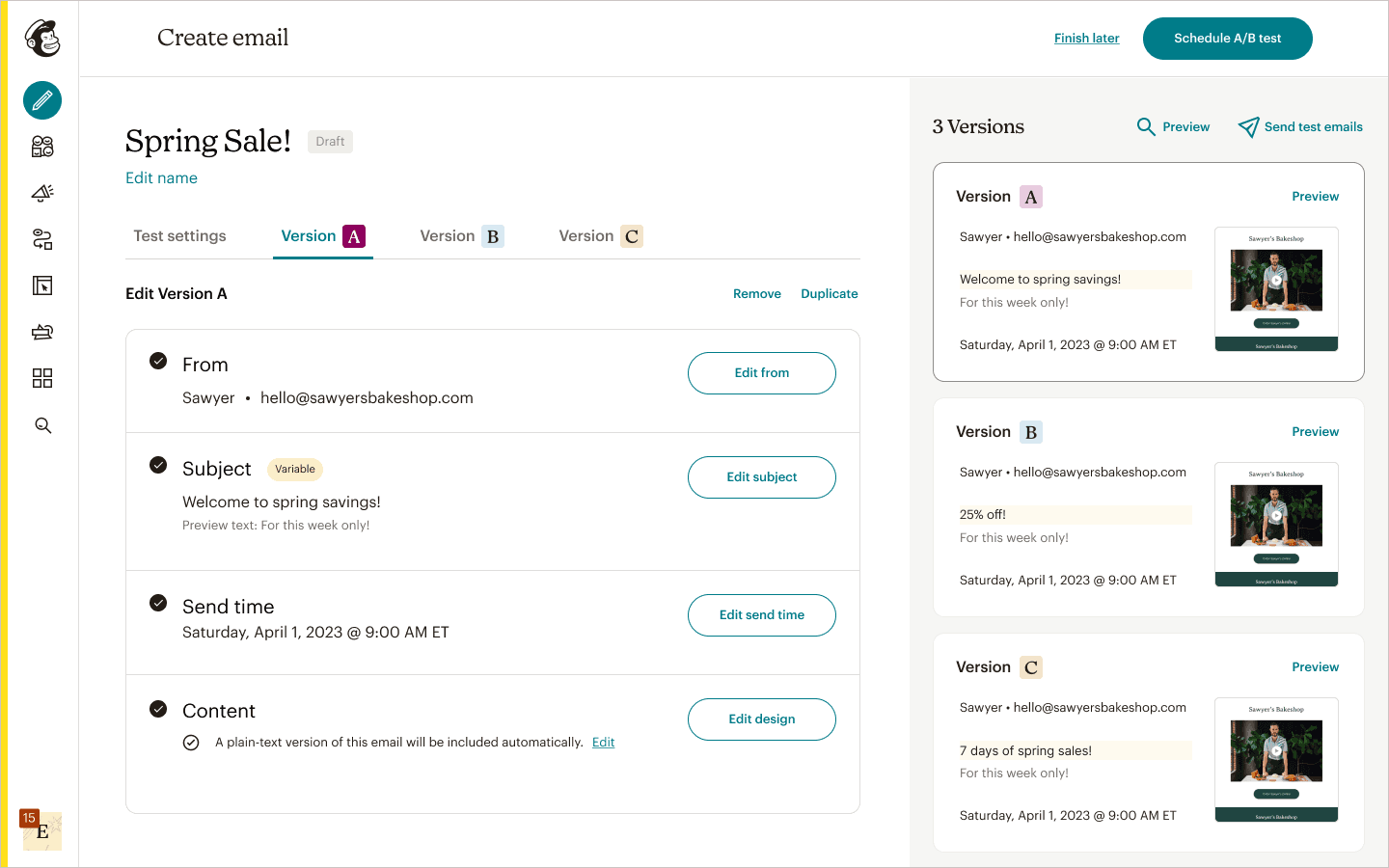

Campaign test variations are independent from each other

Users have a mental model of sending out individual emails that can be freely edited, which disproved the previous model of users sending out a single email with variants only in a specific field.

User interviews & concept tests of tangible flows solidified this insight to help guide future decisions.

Testing tangible flows of 1) single email with 3 field variants, & 2) 3 emails with varying fields

Decisions

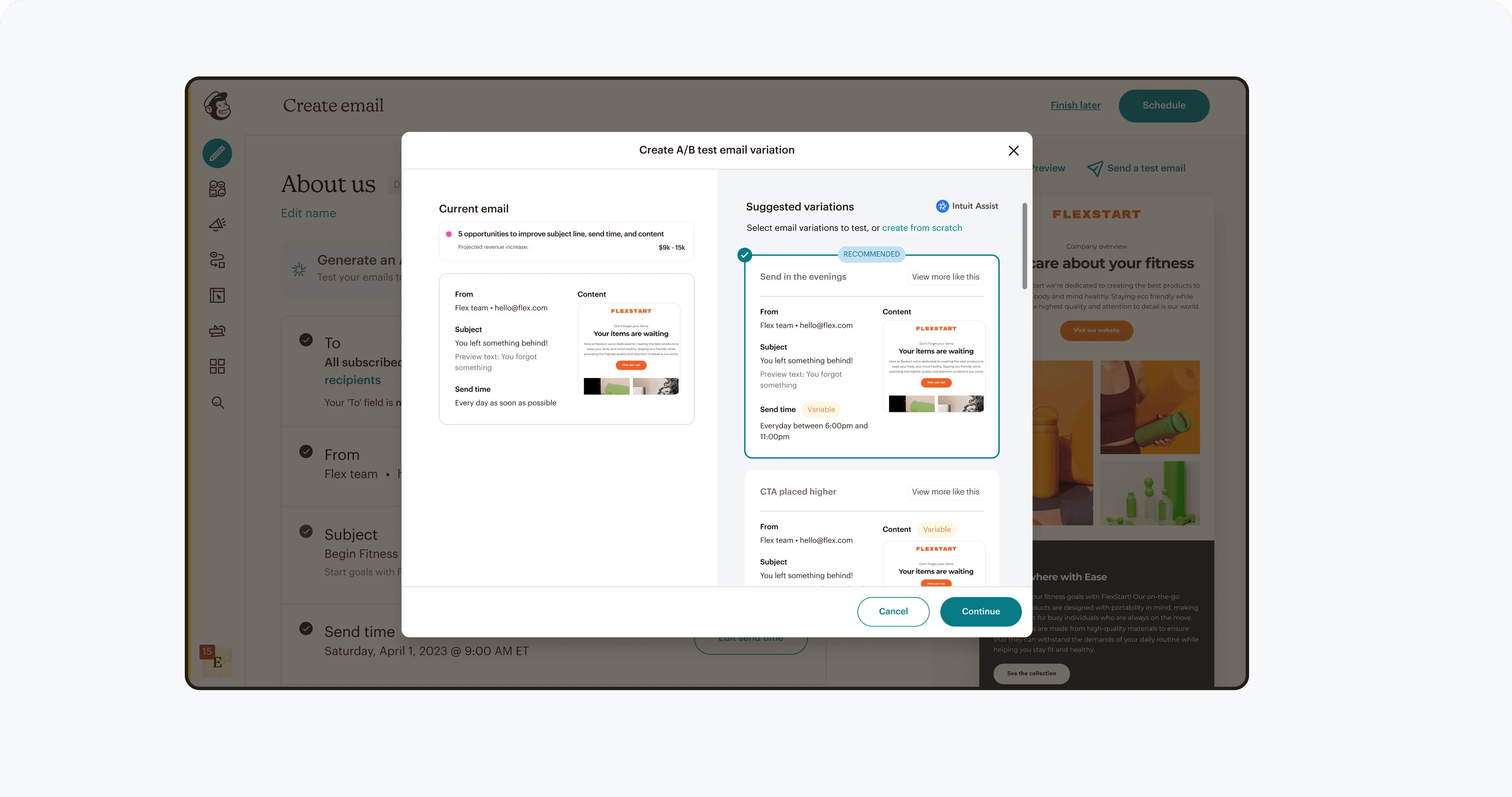

Gaining confidence on foundational decisions & navigating tradeoffs with product & engineering

Key decisions with large experience & engineering implications needed to be backed by qualitative & quantitative data.

User insights, team empathy, & goal alignment helped decide what compromises to make between design, product, & engineering.

Decision 1

Entry point placement to improve explore rates

To increase discoverability quickly and with low lift, we ran experiments that placed the entry point at various spots earlier in the current funnel and on the campaign creation page.

A/B test results & moderated usability testing led to an updated user journey & placement of the entry point by the field input.

In-product testing of various entry point locations

Decision 2

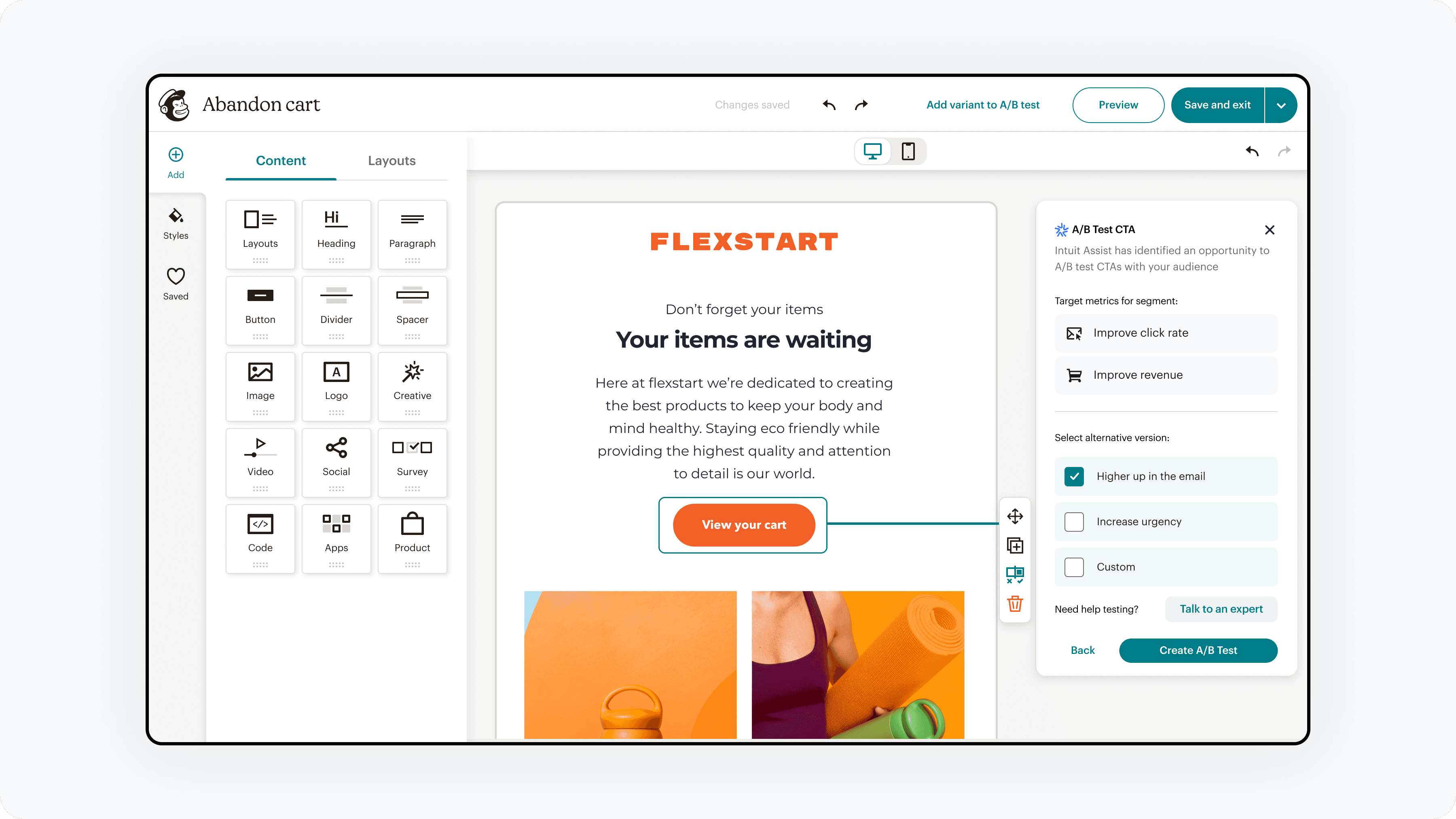

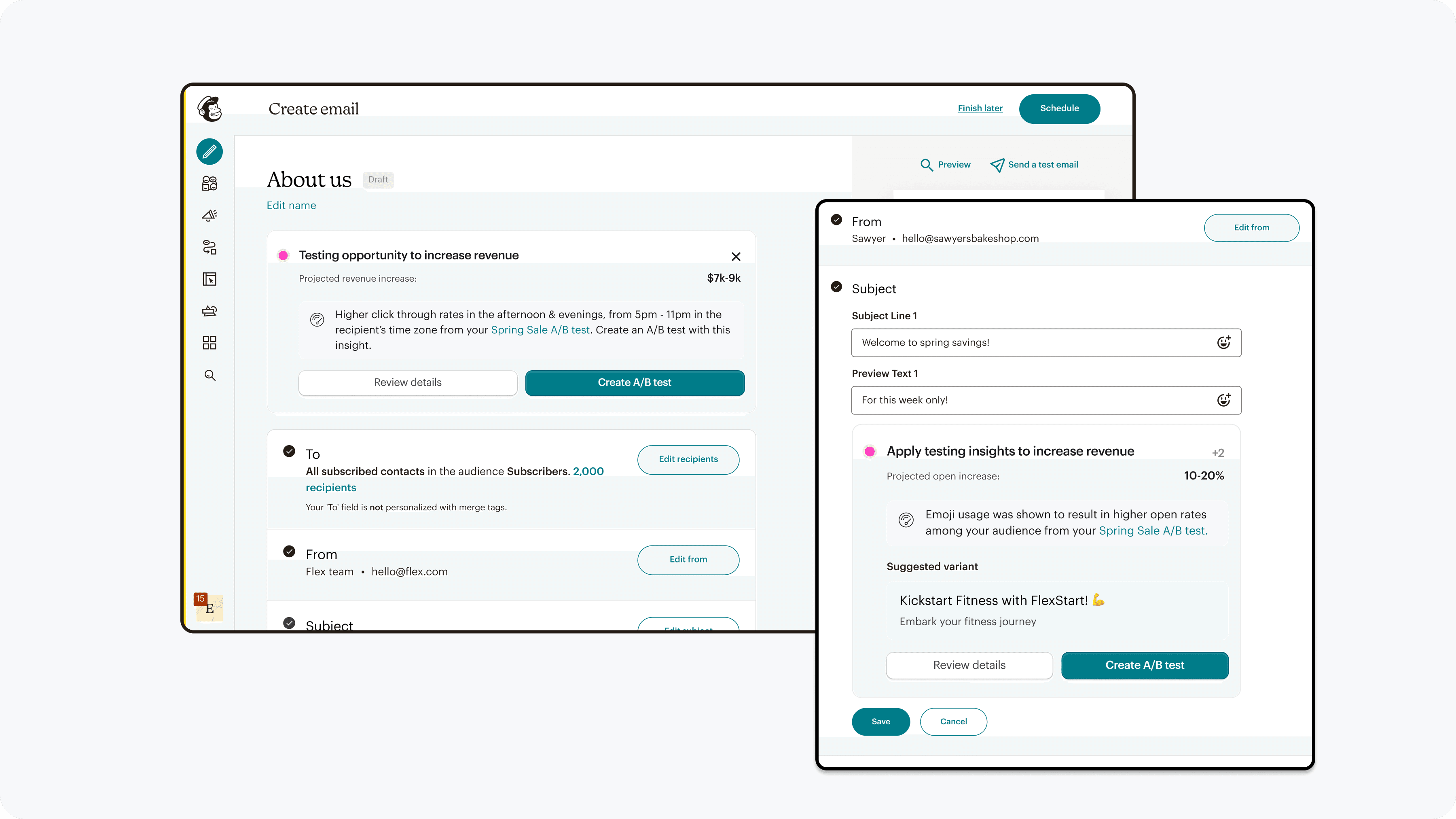

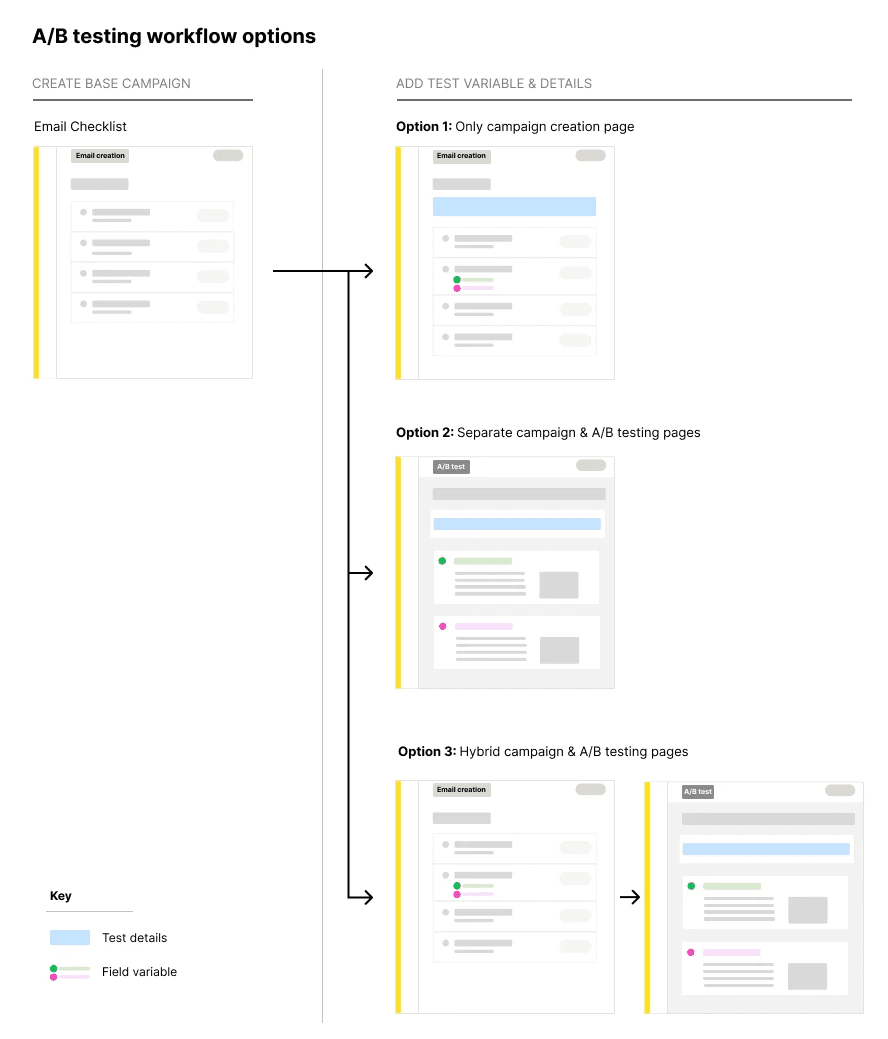

How to seamlessly create A/B tests of varying complexity from campaigns

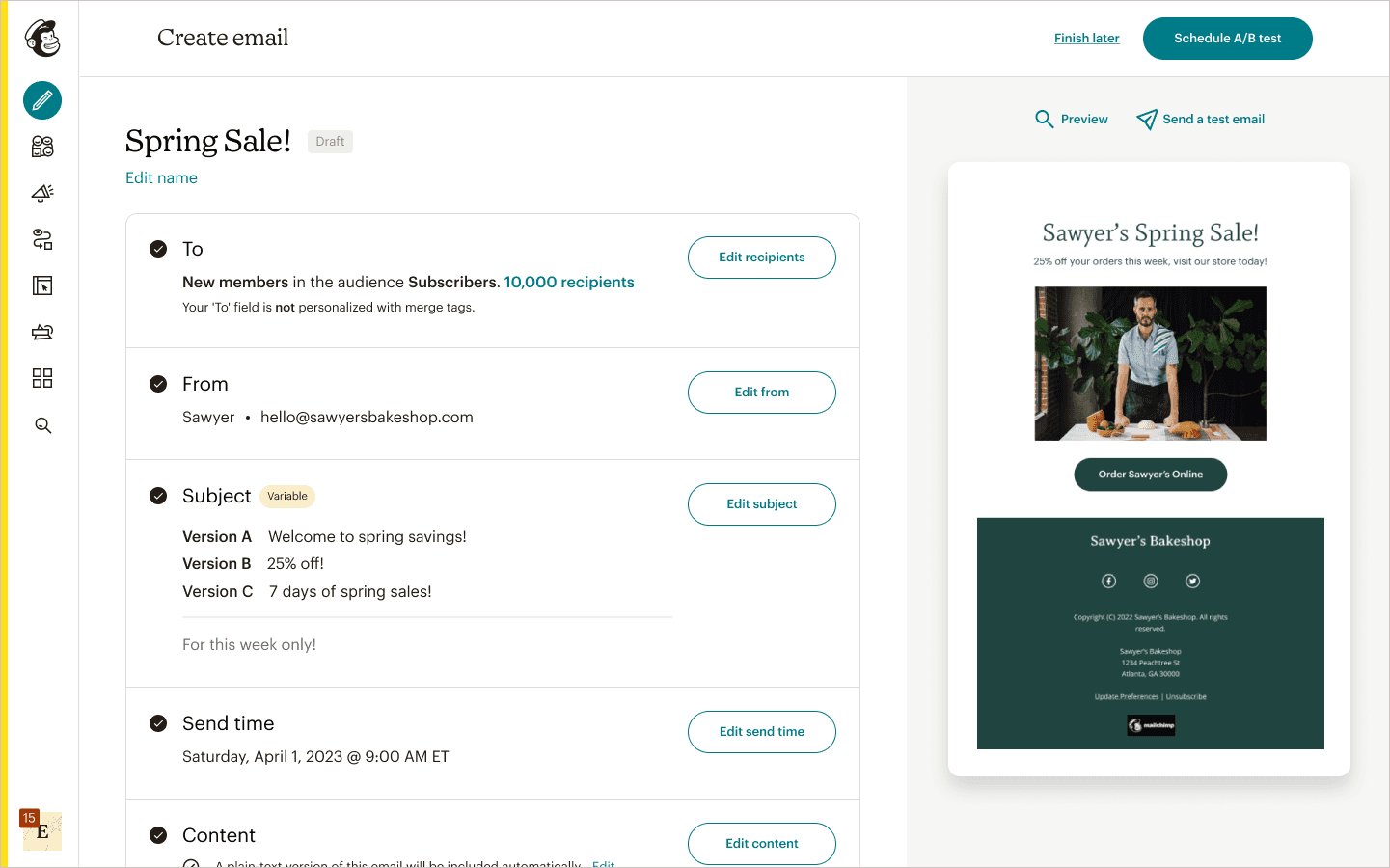

Two large challenges emerged: 1) how to enable both simple & complex test creation without overwhelming or limiting the user, & 2) how to seamlessly transition from campaign creation to test creation.

This design decision informs a pattern that needs to scale across differing campaign creation flows & information architecture, and it determines when to utilize past learned behaviors or introduce new ones.

Through multiple rounds of team co-creation sessions, design reviews, feedback from subject matter experts, & usability testing, a balanced hybrid approach between adding variables on the campaign page & test details on a dedicated review page resonated with 85% of users in the final test.

3 different workflow options

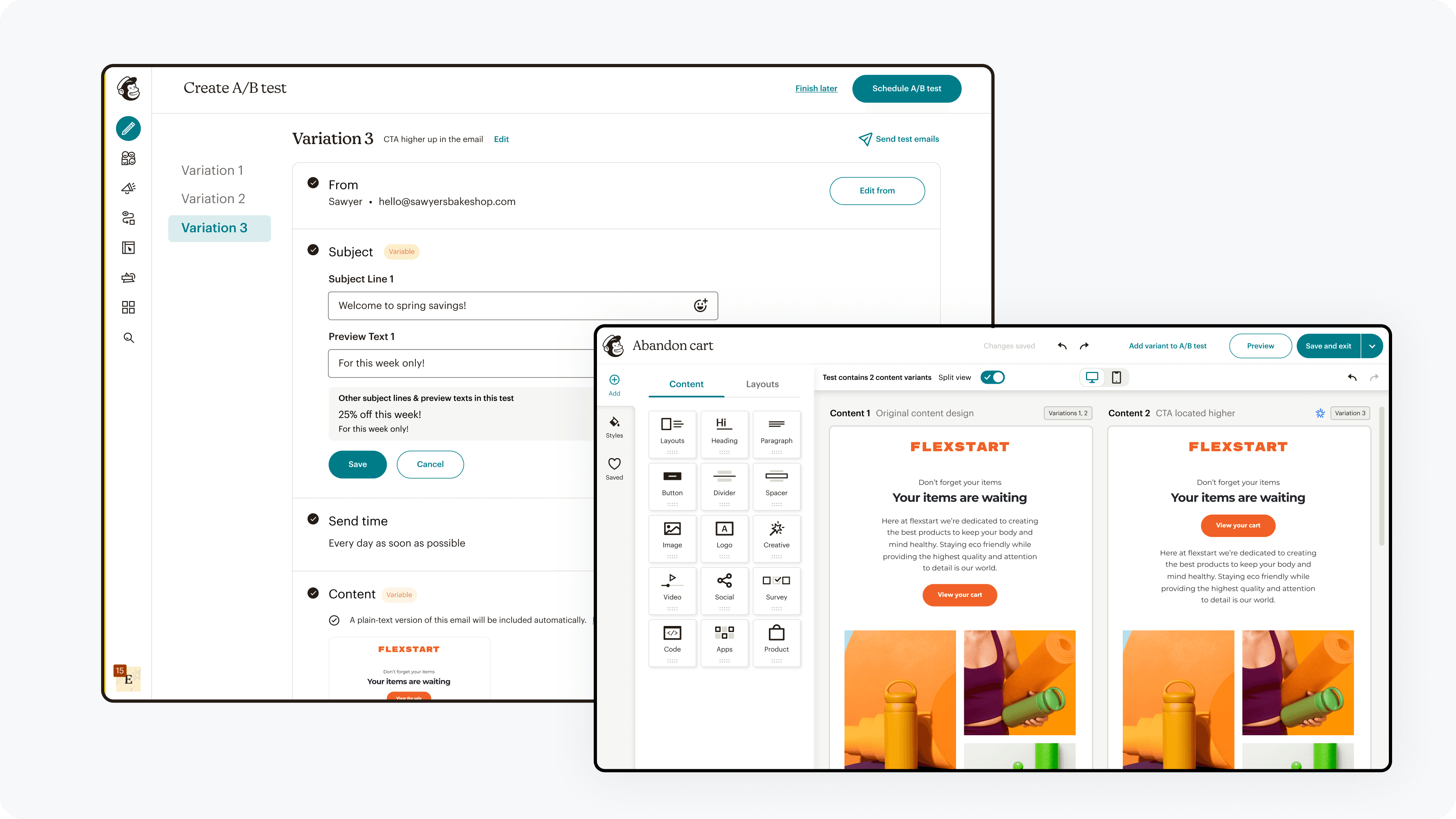

Decision 3

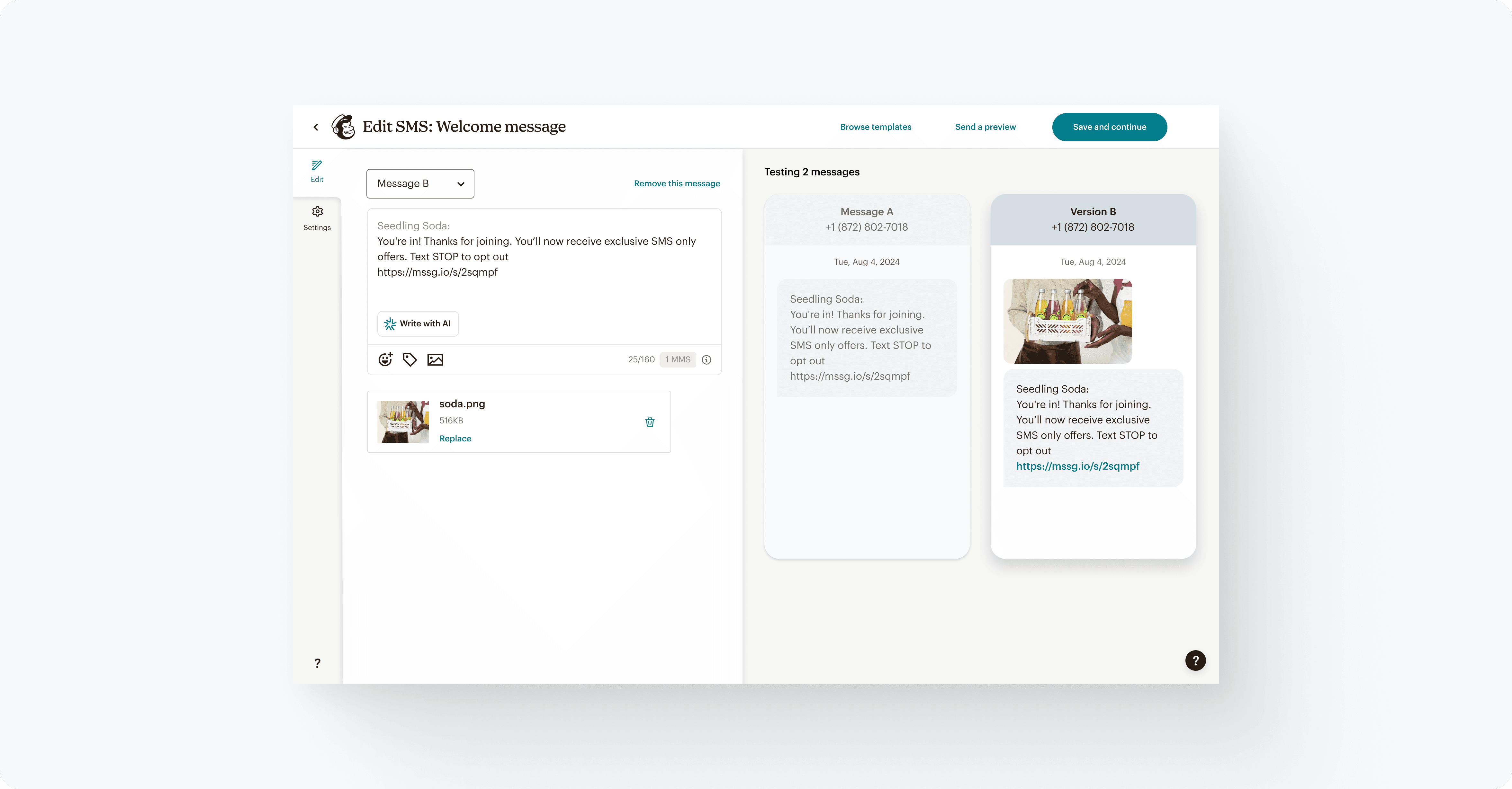

Facilitating comparison during creation & review

Observation & asking deeper questions in contextual interviews revealed users constantly switched between windows to gain confidence in the variables they were creating and the final campaigns to be sent & tested.

Through concept testing & in-product A/B testing, we found that 85% of users expressed greater confidence when they could see comparisons during the creation & review flow.

Providing a way to quickly compare versions

Other design decisions & tradeoffs

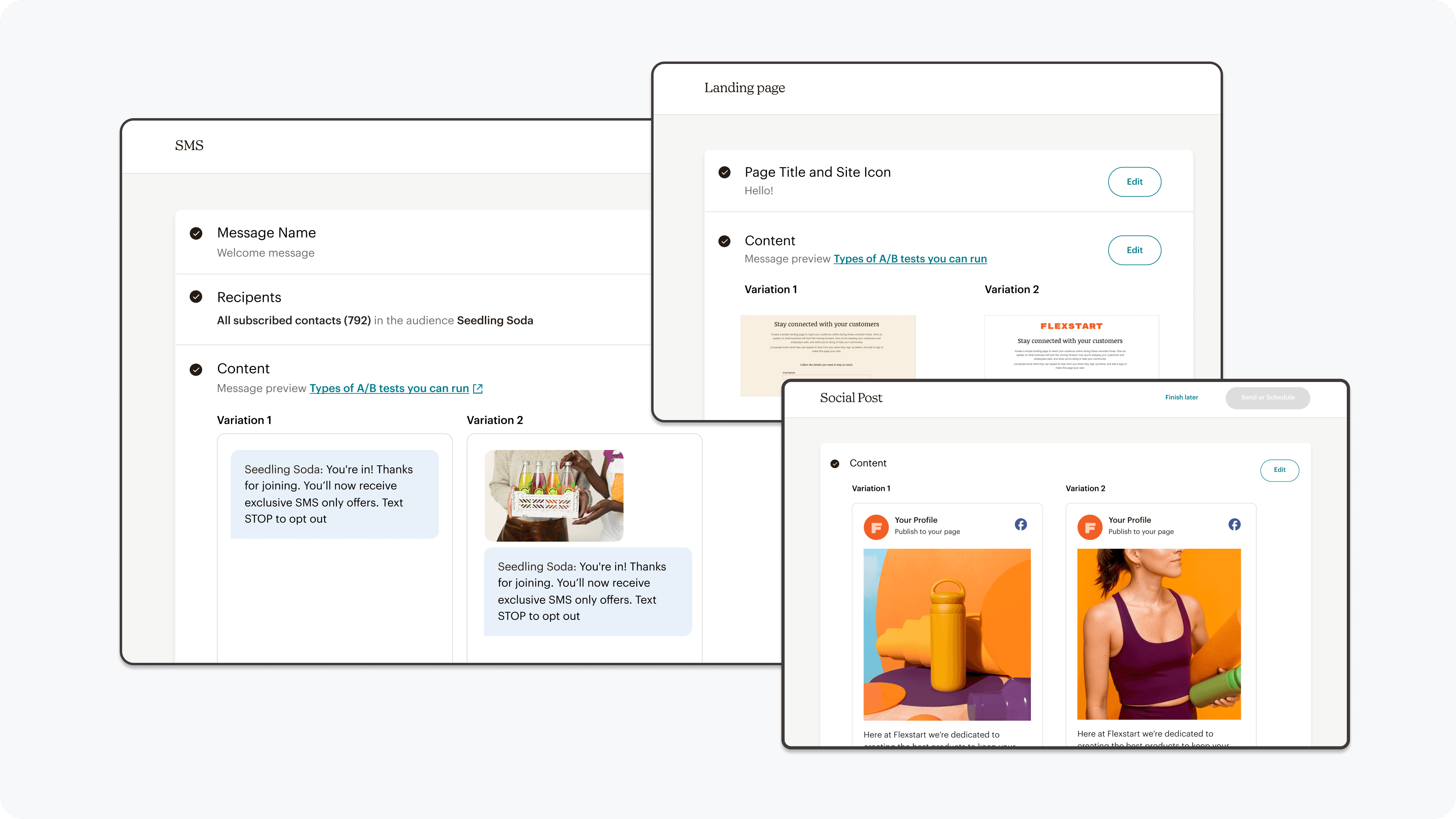

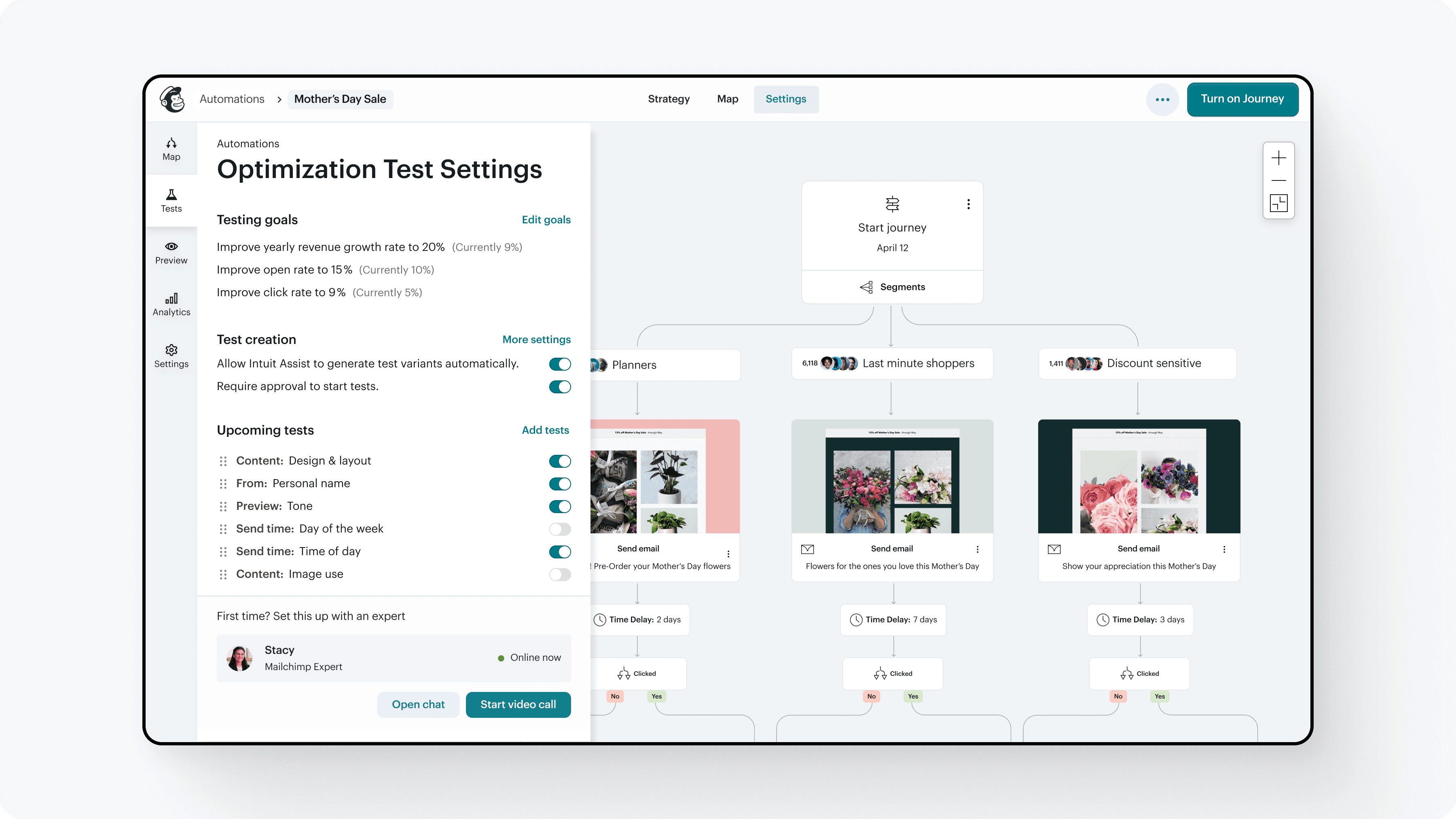

Launching on SMS before Email for quicker impact & feedback

Email was the core channel where A/B testing would have the largest impact, but it also had the most complex infrastructure and a longer development roadmap.

To reduce risk and learn faster, we launched the experimentation workflow first on SMS campaigns. This allowed us to validate the core workflow and user behavior before expanding to email.

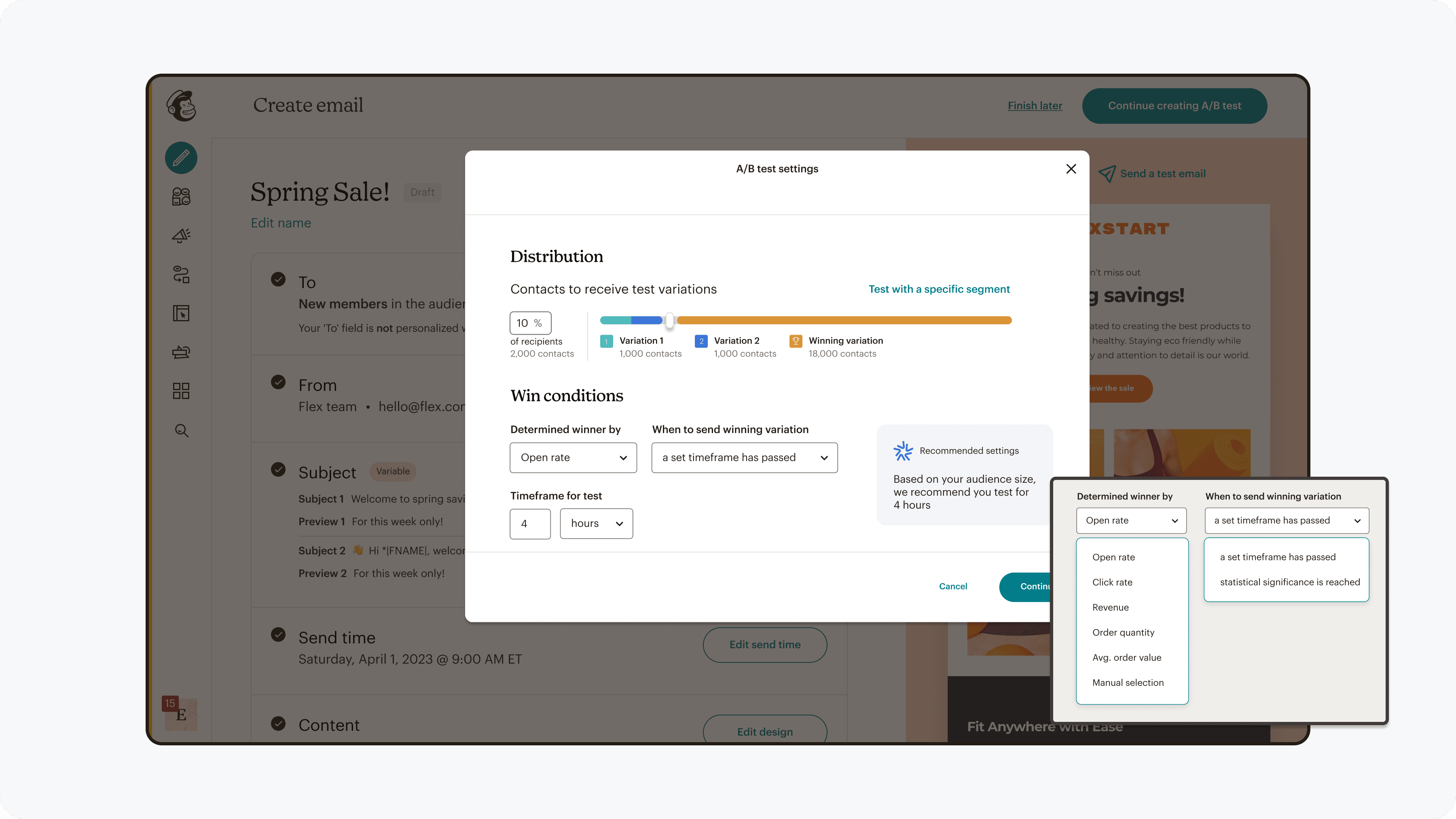

Reducing experiment send time justified engineering cost

Test campaigns initially took 3 hours to send. User feedback presented to engineering leadership led to an engineering spike that reduced send time to 30 minutes (an 83% reduction).
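The 83% figure follows directly from the two send times quoted above. A quick check, with variable names that are illustrative only and not taken from the project:

```python
# Send times quoted in the case study, converted to minutes.
before_minutes = 3 * 60   # original 3 hour send time
after_minutes = 30        # send time after the engineering spike

# Percent reduction relative to the original send time.
reduction_pct = (before_minutes - after_minutes) / before_minutes * 100

print(f"Send time reduced by {reduction_pct:.0f}%")
```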

Limiting automated win conditions while including manual selection of a winner

Supporting multiple automated win criteria at launch required complex backend data pipelines that would significantly delay the release.

Instead of delaying the feature, we launched with a simpler model where users could manually review results and select a winning variant. This allowed marketers to still run the experiments they intended.

Solution

A unified A/B testing experience that works across channels, supports simple to advanced tests, and provides guidance and insights at every step

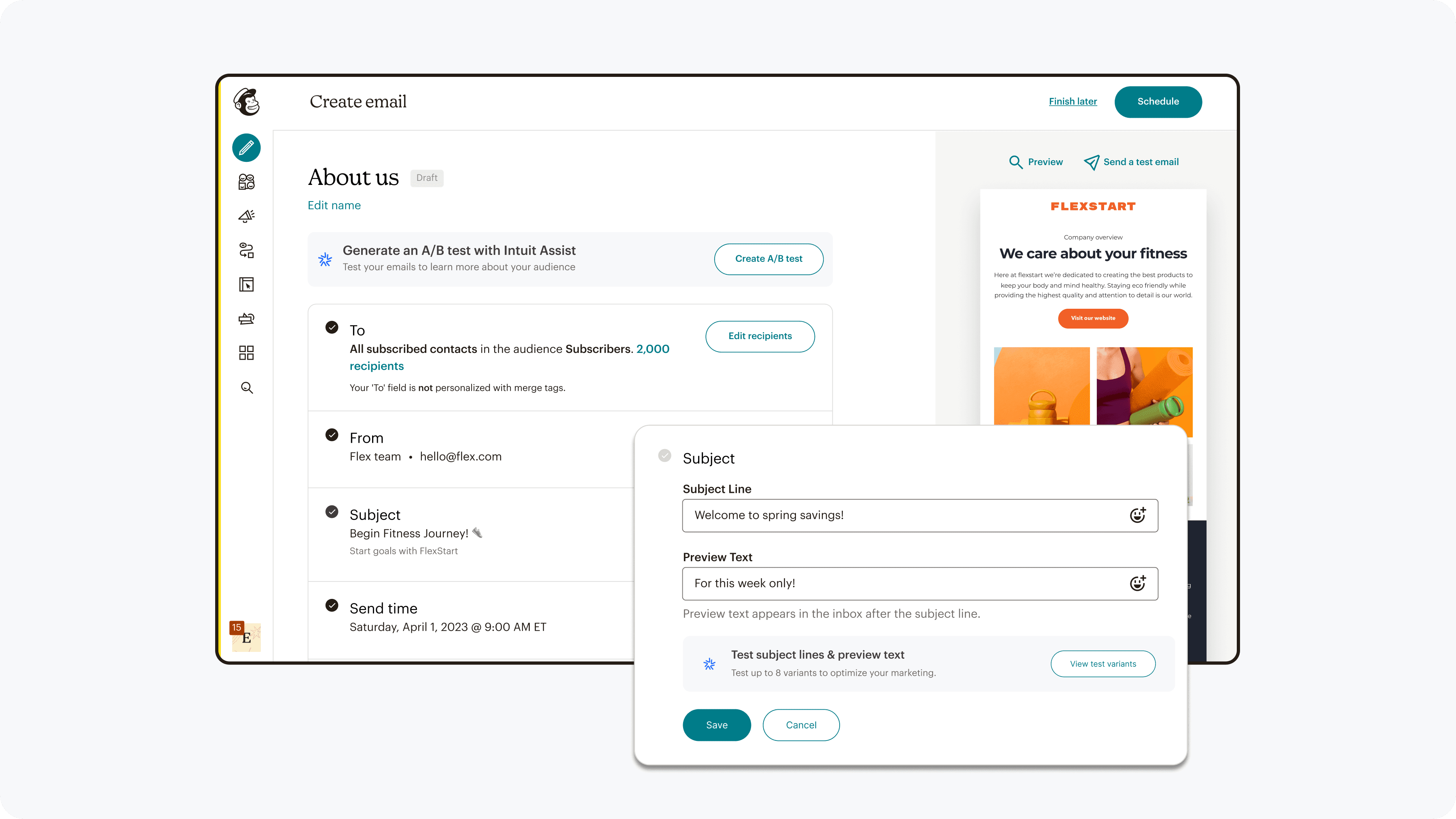

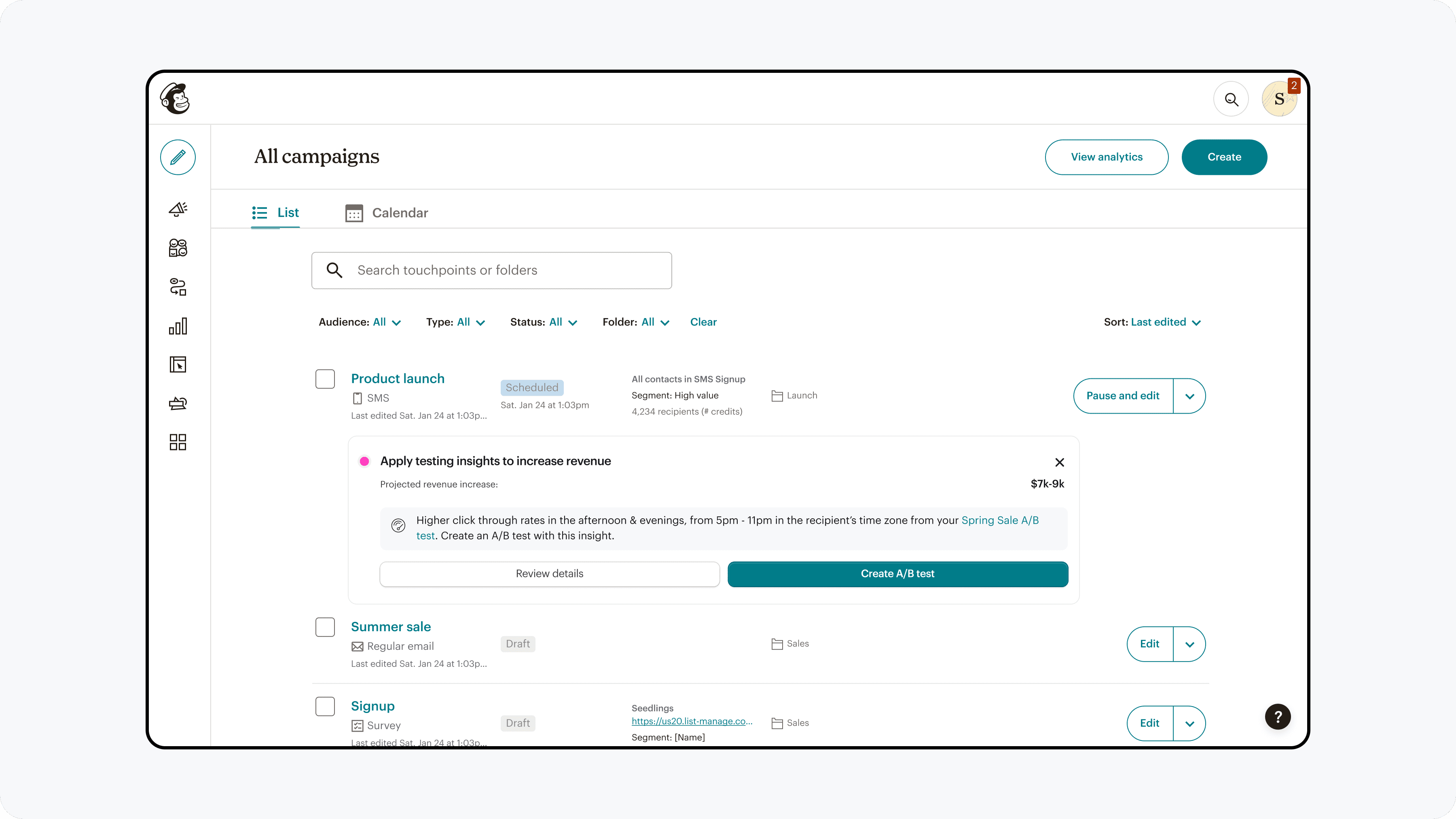

Improved Discoverability

A/B testing entry points were aligned with marketers' natural workflow of creating a base campaign and then adding a variable to test.

Improved Functionality

A/B testing capabilities were expanded & refined to enable and guide users to create effective tests. AI integration was refined from an overwhelming range of choices to a select set of curated options with explanations.

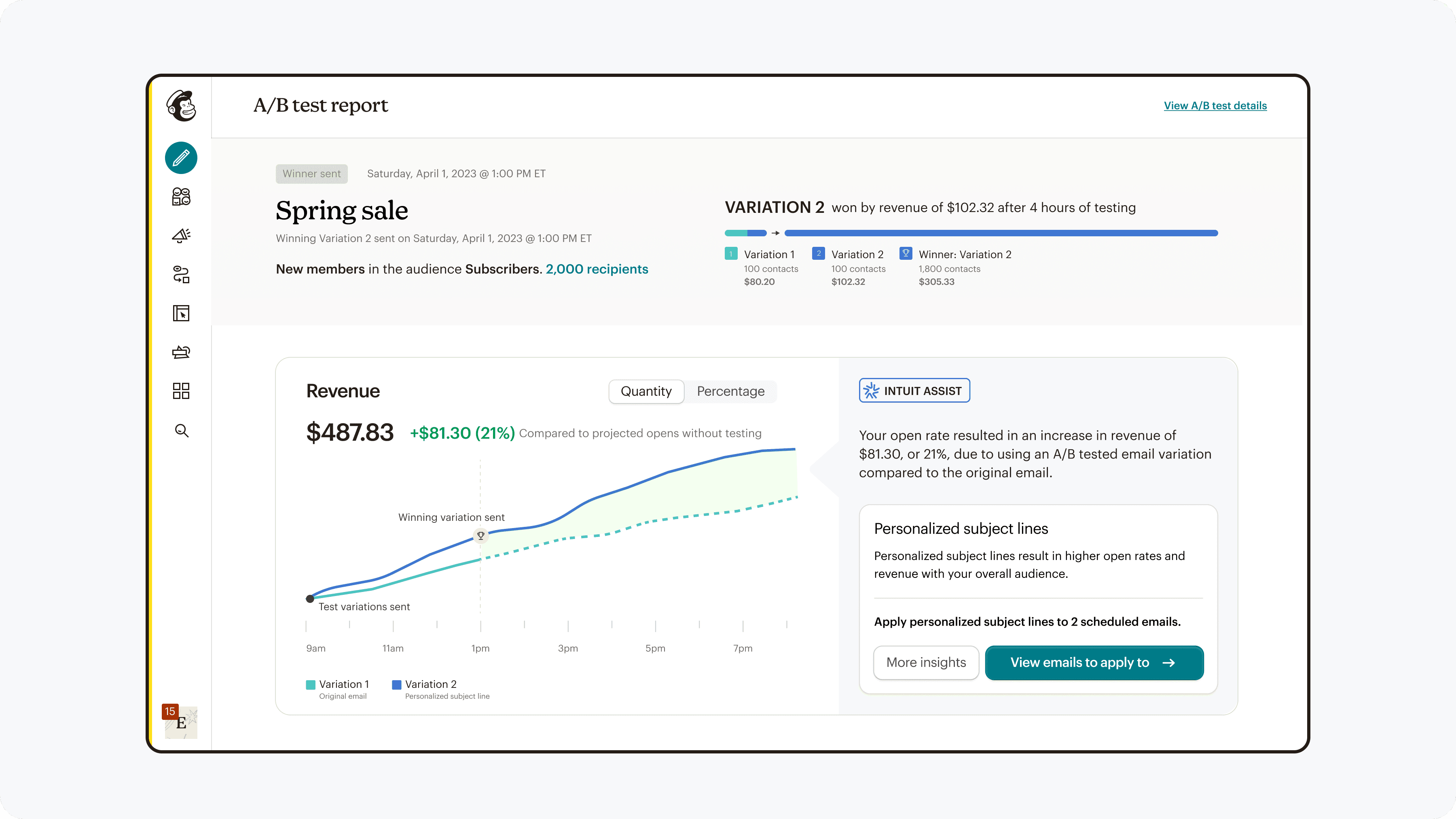

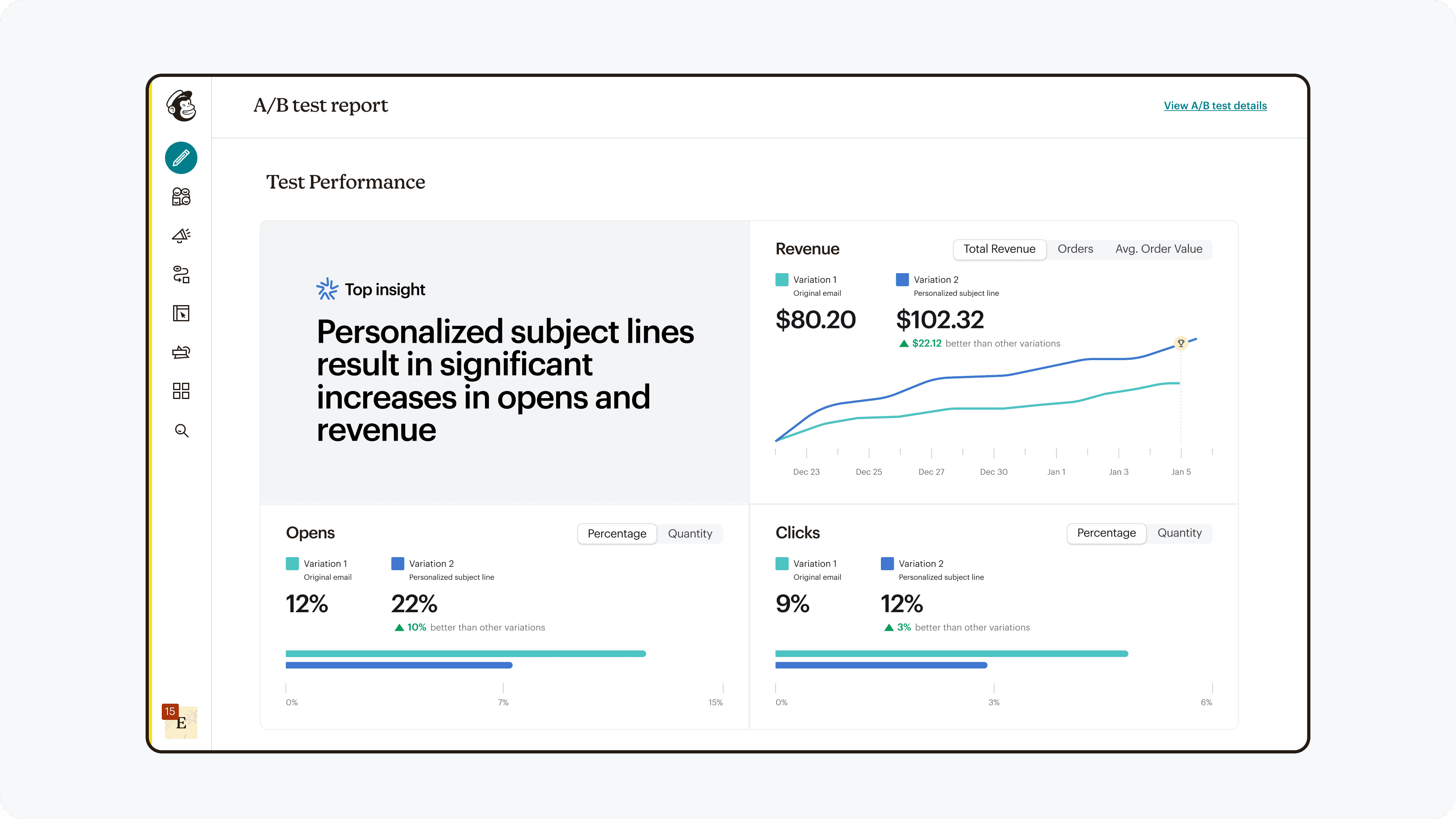

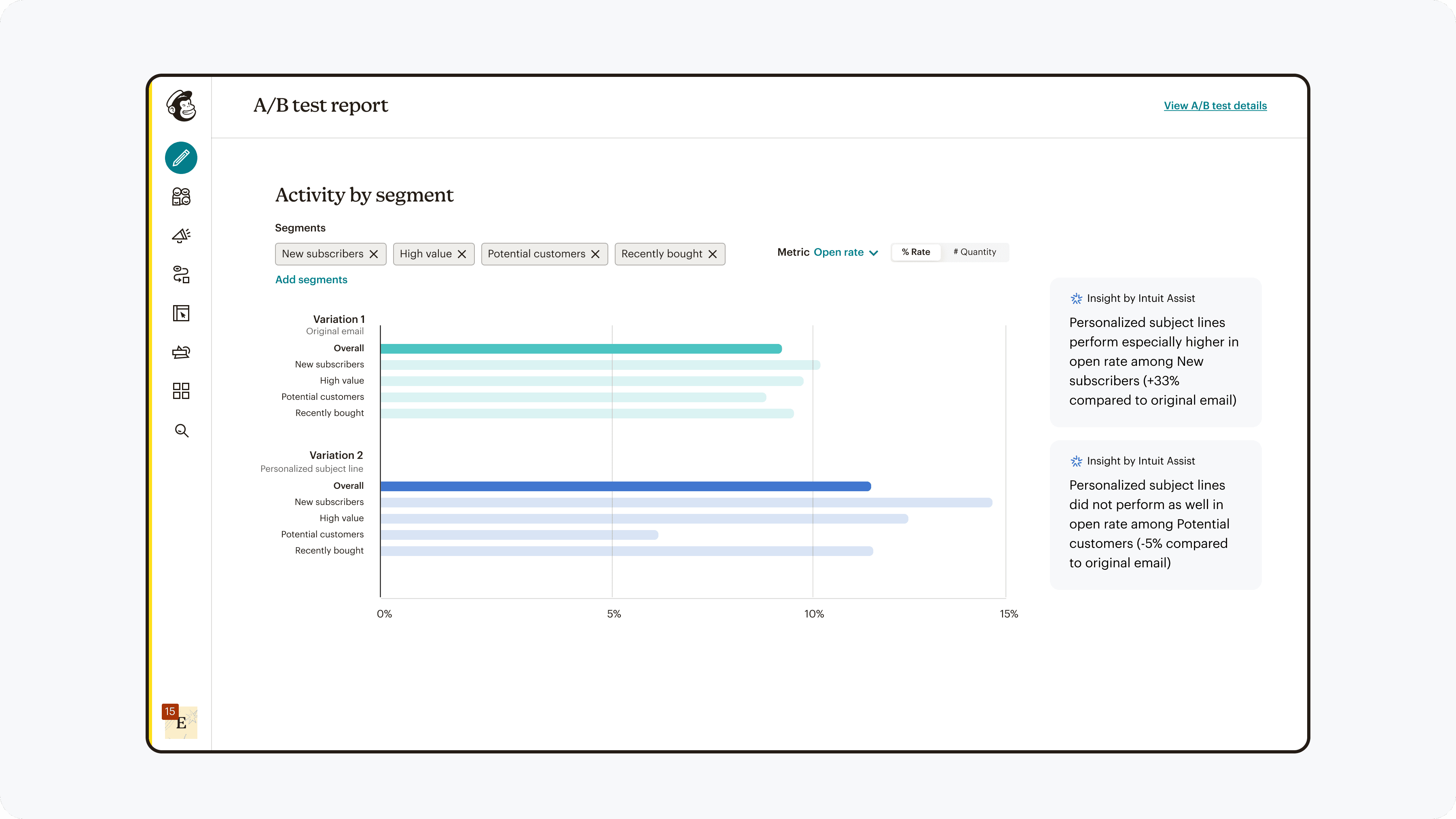

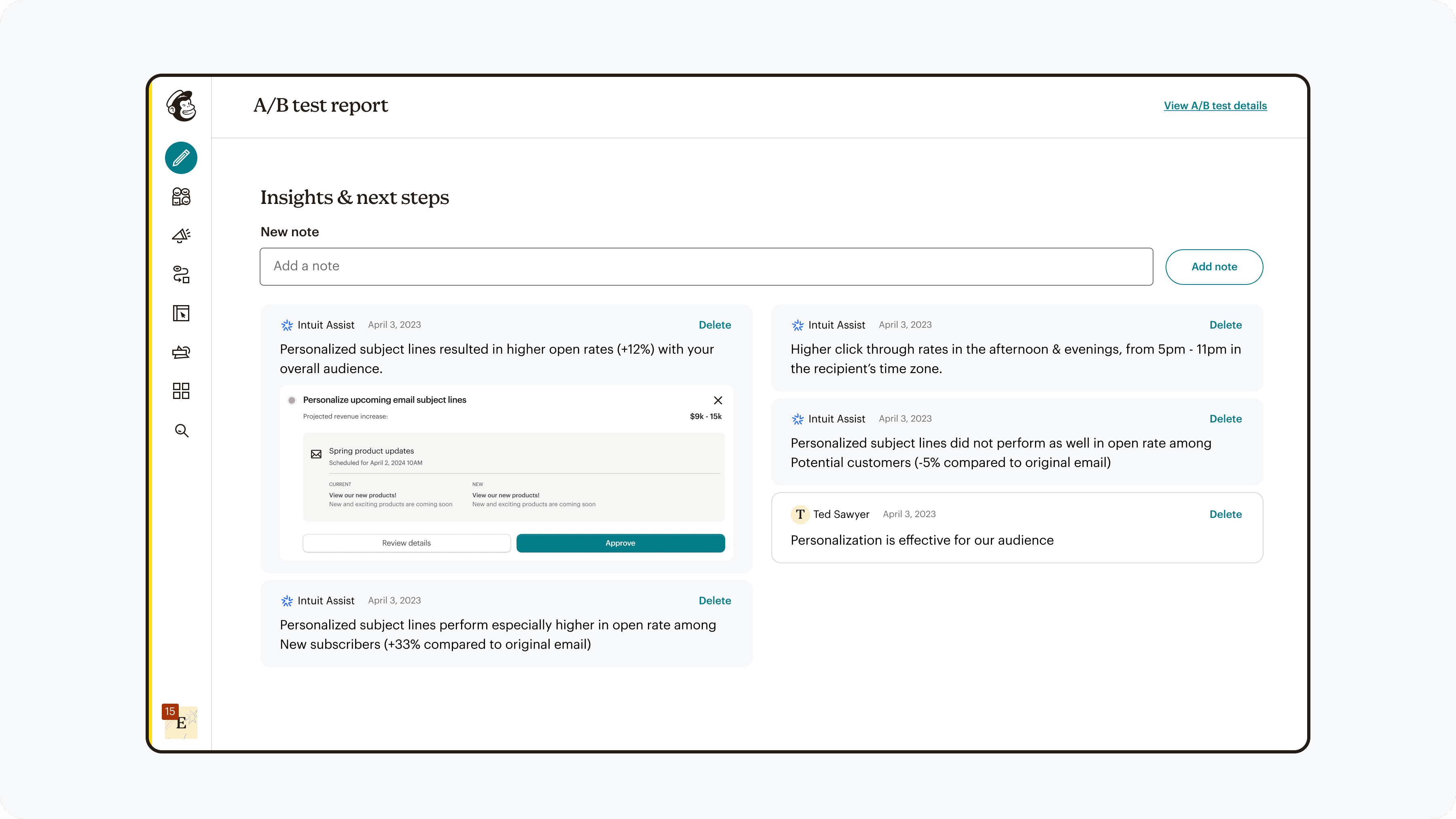

Improved Interpretability

Results were presented in a way that allowed for easy analysis and gave direction for subsequent actions.

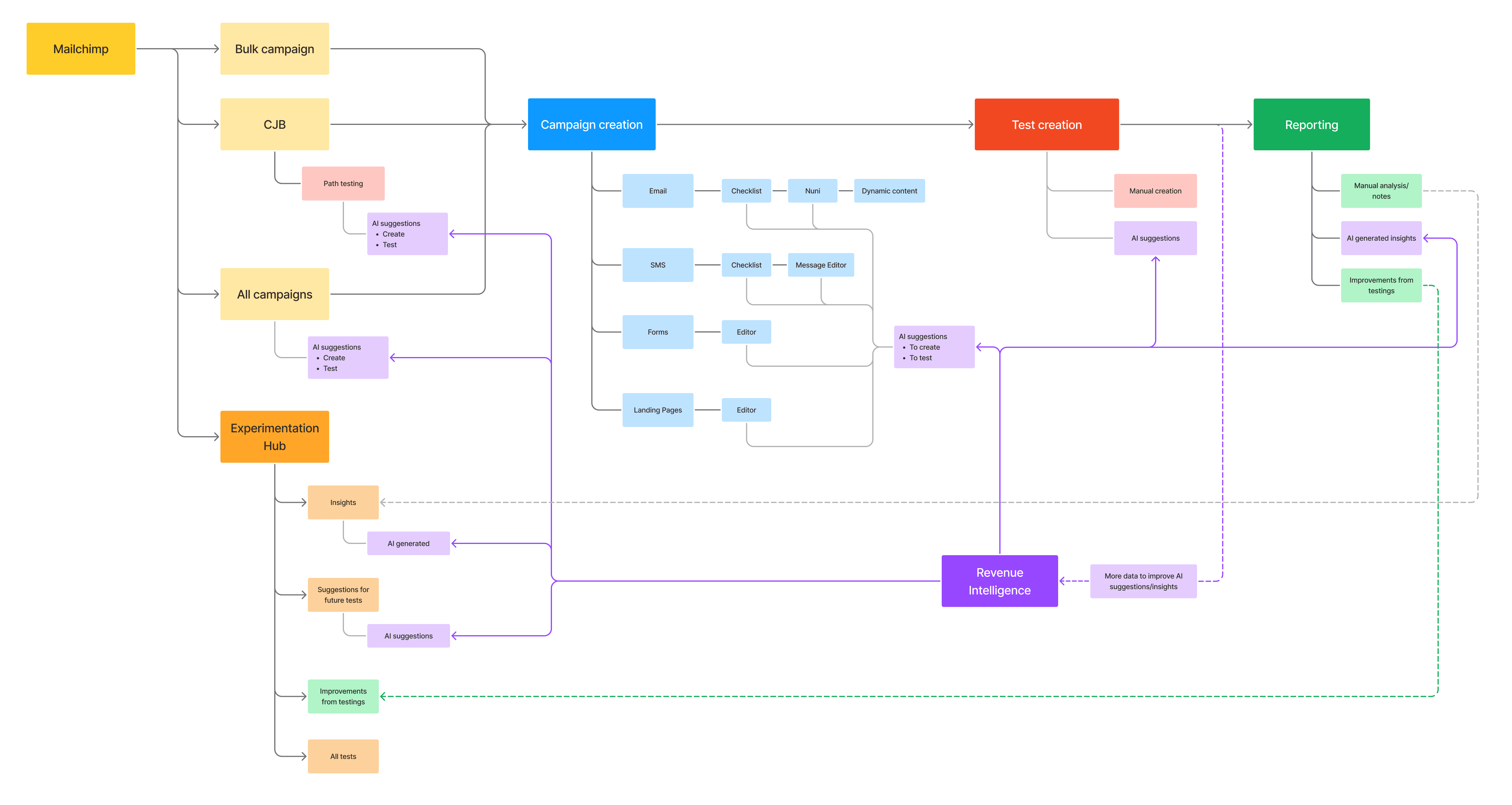

End-to-end experience across all touchpoints

A site map contextualized the full lifecycle of running an experiment and how it spanned across multiple surface areas.

High level site map

Design system contribution

Contributions to the design system created reusable patterns that could scale across the entire platform and be ready for use by any designer whenever testing features were added to the roadmap.

Design system documentation

Impact

The team was able to launch A/B testing for SMS, and afterwards for forms. After 3 months of collecting data for SMS, we found the following results:

Discoverability:

138% boost in exploration

Functionality:

9% lift in completion

Interpretability:

22x increase in retention (1% → 23%)
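A change from 1% to 23% can be read either as a multiple of the starting value or as an increase over it, and the two differ by one. The sketch below shows both readings for the retention figures above; the helper name `relative_change` is hypothetical and used only for illustration.

```python
def relative_change(before, after):
    """Return (multiple, increase) for a metric moving from `before` to `after`.

    multiple = after / before             (how many times the original value)
    increase = (after - before) / before  (growth relative to the original value)
    """
    multiple = after / before
    increase = (after - before) / before
    return multiple, increase


# Retention figures quoted above, in percent.
multiple, increase = relative_change(before=1.0, after=23.0)

print(f"{multiple:.0f}x the original rate, a {increase:.0f}x increase")
```

Under this reading, the 22x figure is the increase over the starting rate, while the final rate is 23 times the original.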

Reflection

Experimenting & adapting

Continual experimentation

Rather than treating research and testing as a phase, I learned to treat experimentation as an ongoing product capability. Running fast experiments (in-product tests, unmoderated studies, and quick surveys) allowed us to quickly resolve conflicting internal perspectives and move decisions forward with evidence.

Flexibility in solutions

One of the core challenges was negotiating competing priorities & expectations. A deep understanding of users & company goals helped me decide which design directions to fight for, and where to think creatively to find compromises that still met user needs.

Adapt to changing priorities

Product roadmaps evolve, and to make this project resilient through them, I documented the experimentation framework and contributed patterns & components to the design system. This allowed teams to adopt parts of the solution incrementally even when priorities changed.